Skin Lesion Classification for Melanoma Detection

Project completed for the 2025 LUMEN Data Science competition, where our solution won first place. Developed models for classifying melanoma in skin lesion images, with optimization focused on fairness with respect to skin color.

Exploring In-Context Learning and Efficient LoRA Fine-Tuning of Gemma3 Models versus BERT Fine-Tuning in Low-Resource Environments

Comparing the performance of Gemma3 models (270M, 1B, and 4B) on classification, reasoning, and QA suites using ICL vs. LoRA fine-tuning, and comparing them to BERT fine-tuned on classification and QA.

Foundational Models for Zero-Shot Image Retrieval - Place Recognition study

Comparing visual-features-only approaches with DINOv3, CLIP, StreetCLIP, and Perception Encoder in zero-shot evaluation.

The best performance was 46.4% Recall@1 when using GPS-aided retrieval with DINOv3-H/16+ and CLIP-B/32.

Using DINOv3-H/16+ alone achieved 44.0%, while combining DINOv3-H/16+ and CLIP-B/32 reached 44.8% Recall@1.

Work experience

R&D Multimodal Deep Learning Engineer @ Arkensight

December 2024 - September 2025

Conducting research and development on open-vocabulary DETR-style object detection.

Achieved (near) SOTA results on 20+ object detection benchmarks with our foundation model.

Working on CLIP-based re-identification and fine-grained visual classification; achieved 87% mAP on MSMT17.

Modeling dense one-to-one (O2O) loss signals for end-to-end object re-identification and multi-object tracking.

Research Internship @ Google | FER

March 2025 - July 2025

Working as a research assistant on an international project by Google. Assigned the task of multi-task, multi-class image classification of road safety attributes; achieved 64.90% mF1. Mentor: prof.dr.sc. Siniša Šegvić

Computer Vision Research Intern @ Visage Technologies

August 2024 - October 2024

Evaluated Masked Image Modeling pretraining efficiency on ConvNeXt-V2 models for classification and detection. Improved training speed by up to 10 times on a single GPU and achieved SOTA results on ImageNet100.

Data Science Intern @ mStart, Fortenova

July 2024 - August 2024

Assigned an individual project focused on demand forecasting for the largest grocery retail supplier in Croatia.

R&D Junior Deep Learning Engineer @ Ericsson

Nov 2022 - July 2024

Contributed to object detection of fish cages on Sentinel-2 and Sentinel-3 satellite imagery. Developed a workflow that included data extraction from the CloudFerro database, transformation, ingestion, and preprocessing. Implemented a Faster R-CNN model, achieving an F1 score of 87% and a recall of 95%.

Data Science Intern | Ericsson Summer Camp

July 2022 - September 2024

Developed regression algorithms for remote sensing analysis of the Adriatic Sea using Sentinel-3 satellite images.

Honors, awards and certificates

Rector's award - Methods for Assessing and Improving the Fairness of Deep Classifiers

November 2025

Mediterranean Machine Learning Summer School (M2L) — Accepted participant

September 2025

1st place, LUMEN data science competition 2025

May 2025

2nd place at HACKL – hackathon for open city of Zagreb

April 2025

ERASMUS+ scholarship for the 2025/2026 academic year

March 2025

Sofascore Data Science Academy

March 2024 – June 2024

4th Place @ Algotrade Hackathon 2024

April 2024

STEM Scholarship, Top 1% High School Students

September 2021

3rd Place, National Philosophy Competition

April 2021

All projects

Foundational Models for Zero-Shot Image Retrieval - Place Recognition study

Comparing visual-features-only approaches with DINOv3, CLIP, StreetCLIP, and Perception Encoder in zero-shot evaluation.

The best performance was 46.4% Recall@1 when using GPS-aided retrieval with DINOv3-H/16+ and CLIP-B/32.

Using DINOv3-H/16+ alone achieved 44.0%, while combining DINOv3-H/16+ and CLIP-B/32 reached 44.8% Recall@1.

Exploring In-Context Learning and Efficient LoRA Fine-Tuning of Gemma3 Models versus BERT Fine-Tuning in Low-Resource Environments

Comparing the performance of Gemma3 models (270M, 1B, and 4B) on classification, reasoning, and QA suites using ICL vs. LoRA fine-tuning, and comparing them to BERT fine-tuned on classification and QA.

Multitask Learning, Dynamic Loss Balancing, and Sequential Enhancement for Road Safety Assessment

Automated road safety assessment by combining modern self-supervised vision transformers, dynamic loss balancing, and sequence-based refinement. Evaluation of large-scale foundation models such as DINOv2, ConvNeXt, and OneFormer on the iRAP-BiH dataset, addressing class imbalance through adaptive loss weighting and multi-task optimization. Integrating local frame-level recognition with Bi-LSTM temporal adjustment to achieve 64.90% macro F1.

Skin Lesion Classification for Melanoma Detection

Project completed for the 2025 LUMEN Data Science competition, where our solution won first place. Developed models for classifying melanoma in skin lesion images, with optimization focused on fairness with respect to skin color.

Automatic Label Error Detection Using Uncertainty Quantification

The proposed method introduces an approach for detecting label errors in semantic segmentation by analyzing connected components within predicted segmentations. Each potential label error is evaluated using a meta-classifier trained on handcrafted features such as entropy, probability differences, intersection over union (IoU), component size, and geometric distances.

Semantic Segmentation of Satellite Images

Under the mentorship of Prof. Dr. Siniša Šegvić, developed algorithms for semantic segmentation of scenes in the DeepGlobe Land Cover satellite dataset using SwiftNet and MagNet, achieving a mean Intersection over Union (mIoU) of 73%.

Cyber (Backdoor) Attacks and Defenses on Deep Learning Models

Team leader on a student project under the mentorship of prof.dr.sc. Siniša Šegvić

We implemented BadNets and data poisoning attacks, along with Neural Cleanse, Fine-Pruning, and jittering defenses, using EfficientNet-B0 and ResNet18.

Low-Rank Adaptation (LoRA) of Gemma-3 4-bit for Profanity Removal

By default, Gemma-3 would not follow instructions to remove profanities, as it was trained never to use them. Developed a small annotated dataset containing profanities and achieved 90% accuracy across 10 runs after LoRA fine-tuning.

Polyphonic Audio Classification

This project focused on polyphonic audio classification, specifically the detection of multiple instruments in audio recordings.

It utilizes a Convolutional Neural Network (CNN) model trained on the IRMAS dataset.

The final model, trained with augmented data, achieved a Hamming accuracy of 90%.

Foundational Models for Zero-Shot Image Retrieval - Place Recognition study

Summary

This project explores non-gradient-based image retrieval on the MSLS London dataset, comparing traditional Bag-of-Words (BoW) methods with zero-shot linear probing of pretrained vision foundation models such as DINOv3, Perception Encoder, and CLIP. Linear evaluation of the DINOv3-H/16+ model achieved significantly higher performance (44.0% Recall@1) than the best SIFT BoW approach (13.2% Recall@1), and ablations showed that GeM pooling outperformed other pooling strategies. The highest performance was achieved by using GPS in a centroid-based retrieval approach with a combination of DINOv3-H/16+ and CLIP-B/32 features, resulting in a best Recall@1 of 46.4%.

About the problem

Image retrieval is the computer vision task of retrieving relevant images from a gallery (database) of images for a given query image, a task relevant for localization, autonomous driving, and reconstruction. For that purpose, we use the Mapillary Street-Level Sequences (MSLS) dataset, specifically its London subset, which consists of 1000 gallery and 500 query images.

Baseline

Our baseline is a bag-of-(visual)-words (BoW) approach using ORB, SIFT, and SURF features. We go over each image and extract its local features, collect all features and cluster them to create a visual vocabulary, and then represent each image, including the query image at inference, as a histogram over the cluster centroids (visual words).
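A minimal sketch of the vocabulary and histogram steps, assuming local descriptors (e.g. 128-dimensional SIFT) have already been extracted per image. Plain Lloyd's k-means in NumPy stands in for whatever clustering library was actually used, the vocabulary size is arbitrary, and `kmeans` / `bow_histogram` are hypothetical helper names.

```python
import numpy as np

def kmeans(x, k, iters=20, seed=0):
    """Plain Lloyd's k-means over descriptor rows of x; returns (k, dim) centroids."""
    rng = np.random.default_rng(seed)
    centroids = x[rng.choice(len(x), size=k, replace=False)].astype(float)
    for _ in range(iters):
        # Assign each descriptor to its nearest centroid (squared Euclidean distance).
        d = ((x[:, None, :] - centroids[None, :, :]) ** 2).sum(-1)
        assign = d.argmin(1)
        for j in range(k):
            members = x[assign == j]
            if len(members):  # keep the previous centroid if a cluster becomes empty
                centroids[j] = members.mean(0)
    return centroids

def bow_histogram(descriptors, vocabulary):
    """L1-normalised histogram of nearest visual words for one image."""
    d = ((descriptors[:, None, :] - vocabulary[None, :, :]) ** 2).sum(-1)
    words = d.argmin(1)
    hist = np.bincount(words, minlength=len(vocabulary)).astype(float)
    return hist / max(hist.sum(), 1.0)

# Usage with random stand-ins for SIFT descriptors of 5 gallery images.
rng = np.random.default_rng(42)
gallery_descs = [rng.normal(size=(50, 128)) for _ in range(5)]
vocab = kmeans(np.vstack(gallery_descs), k=16)
gallery_hists = np.stack([bow_histogram(d, vocab) for d in gallery_descs])
print(gallery_hists.shape)  # (5, 16)
```

Query images are encoded with the same vocabulary, and the resulting histograms can then be compared with any vector similarity.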

Zero-shot linear evaluation of vision backbones

Our setup is simple: we freeze the model, extract features for gallery and query images, apply pooling, and perform image-to-image similarity-based retrieval using cosine similarity. For that purpose, we use DINOv3, CLIP, StreetCLIP, and Perception Encoder vision backbones.
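The retrieval and evaluation steps can be sketched as follows, assuming pooled features are already available as arrays. The set of ground-truth positives per query is an input here; how positives are defined for MSLS (e.g. a GPS distance threshold) is an assumption not specified above, and the helper names are hypothetical.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def retrieve(query_feats, gallery_feats, top_k=5):
    """Rank gallery images by cosine similarity to each query (most similar first)."""
    sims = l2_normalize(query_feats) @ l2_normalize(gallery_feats).T
    return np.argsort(-sims, axis=1)[:, :top_k]

def recall_at_k(ranked, positives, k=1):
    """Fraction of queries with at least one correct gallery image among the top-k results."""
    hits = [len(set(row[:k].tolist()) & pos) > 0 for row, pos in zip(ranked, positives)]
    return float(np.mean(hits))

# Usage: two queries that are slightly perturbed copies of gallery images 0 and 2.
rng = np.random.default_rng(0)
gallery = rng.normal(size=(4, 8))
queries = gallery[[0, 2]] + 0.01 * rng.normal(size=(2, 8))
ranked = retrieve(queries, gallery, top_k=2)
print(recall_at_k(ranked, positives=[{0}, {2}], k=1))
```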

Centroid-based Retrieval

This approach incorporates GPS-annotated data alongside visual features for image retrieval, leveraging location information in the gallery to guide search even when query images lack GPS data. By clustering gallery features based on GPS coordinates and using centroid representations, retrieval can be performed in a location-aware manner.

Approach A: After identifying the centroid most similar to the query, retrieval is first conducted among the images inside that centroid's cluster. If the required number of retrieved images exceeds the number of images available in that cluster, additional images are then retrieved from other clusters.

Approach B: Retrieval uses a weighted similarity score combining similarity to the matched centroid and similarity to individual gallery images. The parameter α ∈ [0, 1] controls the balance between centroid-level location cues and direct feature similarity.
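A rough sketch of both approaches, under stated assumptions: GPS cluster labels for the gallery are given (the GPS clustering itself is omitted), a centroid is the normalised mean visual feature of its cluster, and in Approach B each gallery image is scored with the similarity of the query to that image's own cluster centroid. That last choice is one interpretation of "the matched centroid", not necessarily the one used in the project.

```python
import numpy as np

def l2n(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def cluster_centroids(gallery_feats, cluster_ids):
    """Normalised mean visual feature of each GPS cluster (one int label per gallery image)."""
    ids = np.unique(cluster_ids)
    return ids, l2n(np.stack([gallery_feats[cluster_ids == c].mean(0) for c in ids]))

def approach_a(q, gallery_feats, cluster_ids, top_k):
    """Rank images inside the best-matching cluster first, then fill up from the other clusters."""
    g, q = l2n(gallery_feats), l2n(q)
    ids, cents = cluster_centroids(g, cluster_ids)
    best = ids[np.argmax(cents @ q)]
    sims = g @ q
    inside = np.where(cluster_ids == best)[0]
    outside = np.where(cluster_ids != best)[0]
    order = np.concatenate([inside[np.argsort(-sims[inside])],
                            outside[np.argsort(-sims[outside])]])
    return order[:top_k]

def approach_b(q, gallery_feats, cluster_ids, alpha, top_k):
    """Score = alpha * sim(query, image's cluster centroid) + (1 - alpha) * sim(query, image)."""
    g, q = l2n(gallery_feats), l2n(q)
    ids, cents = cluster_centroids(g, cluster_ids)
    cent_sim = dict(zip(ids.tolist(), (cents @ q).tolist()))
    centroid_term = np.array([cent_sim[c] for c in cluster_ids.tolist()])
    scores = alpha * centroid_term + (1 - alpha) * (g @ q)
    return np.argsort(-scores)[:top_k]

# Usage: two GPS clusters with 2-D toy features.
gallery = np.array([[1.0, 0.1], [0.9, 0.2], [1.0, -0.1],
                    [0.1, 1.0], [0.2, 0.9], [0.6, 0.8]])
clusters = np.array([0, 0, 0, 1, 1, 1])
query = np.array([1.0, 0.3])
print(approach_a(query, gallery, clusters, top_k=4))
print(approach_b(query, gallery, clusters, alpha=0.5, top_k=4))
```

With α = 0, Approach B reduces to plain cosine retrieval; larger α pushes images from clusters that resemble the query upward as a group.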

Results

Let's start with the BoW approach. With all other hyperparameters fixed, SIFT features were the most promising choice.

We performed a hyperparameter search to find the optimal setting for our BoW approach using SIFT features. The best configuration yields 13.2% Recall@1, a 4.4% gain over the baseline.

As for linear evaluation, the next table shows performance across all architectures. For the single-backbone approach, DINOv3-H/16+ had the highest Recall@1 of 44.0%, while combining DINOv3-H/16+ and CLIP-B/32 yielded only slightly better performance of 44.8% Recall@1. Such a small improvement can be attributed to the fact that both models extract general, coarse-grained features that appear to share similar semantic properties.

Using the centroid-based approach in the multi-backbone setup achieved the highest score of 46.4% Recall@1.

As for the pooling ablation, we found that GeM pooling performs best on average across architectures, with a 5.13% gain over the CLS token representation.
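For reference, generalized-mean (GeM) pooling over patch tokens can be sketched as below. The exponent p = 3 is a common default rather than a value reported here, and clamping to a small positive value (as GeM implementations commonly do) is needed because backbone features can be negative.

```python
import numpy as np

def gem_pool(tokens, p=3.0, eps=1e-6):
    """Generalized-mean pooling over the token axis.

    tokens: (num_tokens, dim) patch features from a frozen backbone.
    p = 1 gives average pooling; large p approaches max pooling.
    """
    x = np.clip(tokens, eps, None)  # fractional powers need positive inputs
    return np.mean(x ** p, axis=0) ** (1.0 / p)

tokens = np.array([[1.0, 2.0], [3.0, 2.0]])
print(gem_pool(tokens, p=1.0))   # [2. 2.], i.e. average pooling
print(gem_pool(tokens, p=50.0))  # close to the per-dimension max [3., 2.]
```

The pooled vector is then L2-normalised and used for cosine-similarity retrieval in place of the CLS token.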

Conclusions

To conclude, our results show that linear evaluation of pretrained vision backbones is an effective non-gradient-based approach, where combining two models offers only marginal improvement due to the coarse granularity of encoded features. The best performance is achieved using the centroid-based GPS-aided approach with two strong backbones, confirming the value of leveraging location information in retrieval.

Done while @University of Twente with Onat Akca and Rushat Gabhane

Exploring In-Context Learning and Efficient LoRA Fine-Tuning of Gemma3 Models versus BERT Fine-Tuning in Low-Resource Environments

Summary

Large language models (LLMs) can be adapted to new domains through supervised fine-tuning (SFT), parameter-efficient fine-tuning (PEFT), or in-context learning (ICL). We explore the trade-offs between these approaches in low-resource settings, comparing LoRA-adapted Gemma3 models (270M, 1B, 4B parameters) against fully fine-tuned BERT baselines across classification, question answering, and reasoning tasks. We report three main findings:

(1) ICL effectiveness scales with model size, with larger models matching or surpassing fine-tuned smaller ones;

(2) Instruction-tuned base models preserve cross-task generalization, while LoRA fine-tuning boosts in-domain accuracy but reduces reasoning ability by up to 20%; and

(3) ICL increases inference latency by 26–40% as context grows, whereas LoRA adapters add negligible overhead. BERT remains strongest for single-task performance but lacks cross-task transfer.

What are we doing here

We assume low-GPU production constraints. Under these constraints, we compare parameter-efficient fine-tuning with LoRA to in-context learning (ICL), examining how each approach affects task performance and generalization. We fine-tune a base model on either classification or QA data and evaluate the resulting systems across three domains: text classification, question answering, and commonsense reasoning. The goal is to understand the trade-offs between improving performance on a target task and preserving broader reasoning capabilities, as well as the practical differences in latency, resource usage, and deployment cost between LoRA-adapted models and ICL prompting strategies. We additionally benchmark these variants against a BERT baseline to contextualize efficiency and performance outcomes.

What is in-context learning?

In-context learning refers to providing the model with examples directly in the prompt. The model is given k demonstration examples written in a natural language template, followed by a new input, and is asked to produce the output. The model is expected to infer the pattern from the demonstrations and apply it to the new case. This approach requires no parameter updates and instead relies on the generalization ability of the pretrained model. In general, we assume that larger models perform better in this setting due to their stronger internal representations.
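A minimal sketch of how a k-shot prompt can be assembled. The template, the field names, and the AG News-style examples are illustrative assumptions, not the templates used in the experiments, and any model-specific chat formatting for the instruction-tuned Gemma3 variants is left out.

```python
def build_icl_prompt(demos, query, template="Text: {text}\nLabel: {label}", k=None):
    """Concatenate k demonstrations and the new input into a single prompt string."""
    chosen = demos if k is None else demos[:k]
    blocks = [template.format(text=d["text"], label=d["label"]) for d in chosen]
    # The query uses the same template with an empty label for the model to complete.
    blocks.append(template.format(text=query, label="").rstrip())
    return "\n\n".join(blocks)

demos = [
    {"text": "Stocks rally after rate cut", "label": "Business"},
    {"text": "Striker scores twice in final", "label": "Sports"},
]
print(build_icl_prompt(demos, "New GPU doubles training speed", k=2))
```

Every extra demonstration lengthens the prompt, which is where the latency overhead of larger k comes from.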

What is LoRA?

A very good blog post on LoRA can be found here: https://thinkingmachines.ai/blog/lora/

LoRA is a form of parameter-efficient fine-tuning that keeps the original weight matrix frozen and approximates the optimal weight update as the product of two smaller matrices with inner dimension r (the rank). As r grows toward the full rank of the weight matrix, the low-rank update can express any change and LoRA approaches full fine-tuning. For smaller ranks, it acts as a more efficient approximation, since only the two small matrices are updated for the task. During training, these matrices learn a task-specific weight delta from the loss signal. In the end, the LoRA adapter is simply this additional task-specific low-rank update, which can be added to the base model's weights at inference time.
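To make this concrete, here is a NumPy sketch of a single LoRA-adapted linear layer. It follows the usual formulation (frozen W, update scaled by alpha / r, B initialised to zero so the adapter starts as a no-op), but it is an illustration only, not the PEFT library API used in practice; rank and alpha values are arbitrary.

```python
import numpy as np

class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, W, r=8, alpha=16, seed=0):
        d_out, d_in = W.shape
        rng = np.random.default_rng(seed)
        self.W = W                                      # frozen pretrained weight
        self.A = rng.normal(0.0, 0.01, size=(r, d_in))  # trainable, small random init
        self.B = np.zeros((d_out, r))                   # trainable, zero init: no change at start
        self.scale = alpha / r

    def __call__(self, x):
        # x: (batch, d_in). Only A and B would receive gradient updates during training.
        return x @ self.W.T + self.scale * (x @ self.A.T) @ self.B.T

    def merged_weight(self):
        # The update can be folded into W, so inference costs the same as the base layer.
        return self.W + self.scale * self.B @ self.A

rng = np.random.default_rng(1)
W = rng.normal(size=(64, 32))
layer = LoRALinear(W, r=4)
x = rng.normal(size=(2, 32))
assert np.allclose(layer(x), x @ W.T)            # B = 0, so the adapter starts as a no-op
layer.B = rng.normal(size=(64, 4))               # pretend training has updated B
assert np.allclose(layer(x), x @ layer.merged_weight().T)
print(W.size, layer.A.size + layer.B.size)       # 2048 384: full vs. trainable parameters
```

The merge step is why LoRA adapters add negligible inference overhead once deployed.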

Fine-tuning BERT

In this setup, the pretrained BERT parameters are directly updated to optimize performance for the target task, rather than using adapter layers or in-context prompting. For classification (AG News), we fine-tune BERT-base-uncased, attaching a classification layer and optimizing the model to predict one of four news categories.

For extractive question answering (SQuAD v2), we fine-tune DistilBERT-base-uncased with a standard span-prediction head that selects start and end token positions for the answer.
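How a span-prediction head turns start and end logits into an answer can be sketched as follows. This mirrors common SQuAD v2-style post-processing under assumptions: the span score is the sum of start and end logits, answers are length-limited, and the [CLS] position (index 0 here) serves as the "no answer" score. Real pipelines also mask question tokens and may tune a null-score threshold, which is omitted.

```python
import numpy as np

def best_span(start_logits, end_logits, max_answer_len=30, null_index=0):
    """Return the highest-scoring (start, end) token span, or None for 'no answer'."""
    n = len(start_logits)
    null_score = start_logits[null_index] + end_logits[null_index]
    best, best_score = None, -np.inf
    for s in range(n):
        if s == null_index:
            continue
        # Only spans with start <= end and a bounded length are considered.
        for e in range(s, min(n, s + max_answer_len)):
            score = start_logits[s] + end_logits[e]
            if score > best_score:
                best, best_score = (s, e), score
    return best if best_score > null_score else None

start = np.array([0.0, 1.0, 5.0, 0.5, 0.2])
end = np.array([0.0, 0.3, 1.0, 4.0, 0.1])
print(best_span(start, end))  # (2, 3)
```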

Methodology

We use the Gemma3 model family (https://deepmind.google/models/gemma/gemma-3/) for our experiments, specifically the 270M, 1B, and 4B variants.

We fine-tune Gemma3 models on one target dataset from either the classification (AG News) or question-answering (SQuAD v2) suite, and then evaluate each resulting model across all three task suites (classification, QA, and reasoning) to assess cross-domain transfer and potential trade-offs in generalization. For comparison, we include encoder-only BERT baselines, which are fully fine-tuned separately for each task and evaluated only within their respective domains.

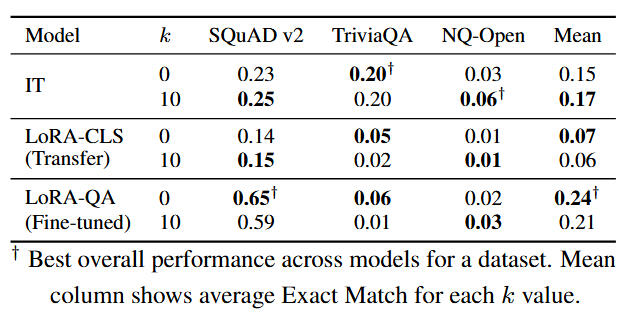

Results - Gemma3-270m-it

Task-specific LoRA fine-tuning improves performance within the fine-tuned domain, but leads to measurable degradation on tasks outside that domain, particularly in reasoning.

While in-context learning with additional demonstrations can boost the base model, it does not fully recover the cross-task generalization lost during domain-specific adaptation.

Results - Gemma3-1b-it

For Gemma3-1B-it, adding more in-context examples improves the base model (+4% on classification, +2% on QA, and +4% on reasoning), but these gains do not carry over to the LoRA-fine-tuned variants, indicating that even small parameter updates reduce generalization. Although LoRA-QA improves in-domain QA performance, the base model outperforms both LoRA-CLS and LoRA-QA on the reasoning suite. Moreover, the smaller 270M model achieves higher post-fine-tuning performance than the 1B model, suggesting that carefully tuned smaller models can match or exceed larger ones in low-resource fine-tuning settings.

Results - Gemma3-4b-it

For Gemma3-4B-it, increasing the number of in-context examples yields the strongest gains of all model sizes, with k = 10 improving performance by approximately +12% on classification, +13% on QA, and +4% on reasoning, enabling the base model to match or exceed the performance of smaller LoRA-fine-tuned variants. While the 4B model does not outperform LoRA-QA on SQuAD-v2 specifically, it achieves the highest average QA performance across datasets and consistently improves across all suites, indicating that effective in-context learning benefits from sufficient model capacity.

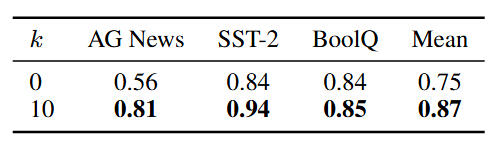

Results - BERT

Fully fine-tuning BERT-base-uncased on AG News yields strong in-domain performance (93.7% accuracy), outperforming all Gemma3 variants with either LoRA or ICL. However, this does not generalize: performance on SST-2, BoolQ, and all reasoning tasks is near random, indicating that BERT fine-tuned on a single dataset is suitable only for single-task production pipelines.

Results - DistilBERT

DistilBERT-base-uncased fine-tuned on SQuAD v2 achieves strong in-domain performance (67% EM), outperforming both Gemma3-270M and Gemma3-1B LoRA variants on this dataset. However, performance drops to near zero on TriviaQA and NQ-Open, indicating that this fine-tuning provides no cross-dataset generalization and remains effective only for the specific dataset it was trained on.

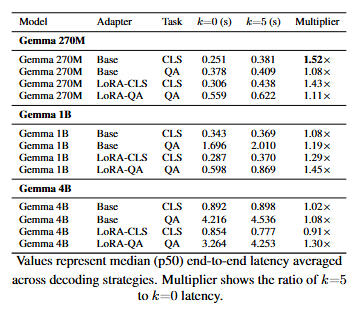

Results - Inference metrics

DistilBERT-base-uncased offers substantially lower inference cost than all Gemma3 variants, achieving TTFT ≈ 8.1 ms, TPOT ≈ 0.3 ms, and throughput ≈ 3837 tps, which is several orders of magnitude faster than Gemma3-270M and Gemma3-4B. For Gemma3 models, LoRA adaptation has negligible runtime impact, with changes in TPOT and throughput remaining within ±10% of the base model. In contrast, in-context learning introduces notable overhead: increasing k to 25 raises TPOT by 26–40% and reduces throughput by 21–28%. Overall, efficiency is dominated by context length rather than by LoRA, and BERT remains the most cost-effective option for single-task inference workloads.
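The three metrics can be computed from per-token timestamps roughly as below. These are common definitions (TTFT as the delay to the first output token, TPOT as the mean gap between subsequent tokens, throughput as generated tokens per second of wall time); the exact measurement protocol behind the numbers above, for example whether prompt tokens count toward throughput, is not stated and is an assumption here.

```python
from dataclasses import dataclass

@dataclass
class GenerationTiming:
    request_start: float   # seconds, when the prompt was submitted
    token_times: list      # seconds, arrival time of each generated token

def inference_metrics(t):
    """Return (TTFT, TPOT, throughput) from per-token timestamps."""
    ttft = t.token_times[0] - t.request_start
    n = len(t.token_times)
    # TPOT averages the gaps between consecutive tokens after the first one.
    tpot = (t.token_times[-1] - t.token_times[0]) / (n - 1) if n > 1 else 0.0
    throughput = n / (t.token_times[-1] - t.request_start)  # output tokens per second
    return ttft, tpot, throughput

timing = GenerationTiming(request_start=0.0, token_times=[0.10, 0.12, 0.14, 0.16])
ttft, tpot, tps = inference_metrics(timing)
print(f"TTFT={ttft * 1000:.1f} ms, TPOT={tpot * 1000:.1f} ms, throughput={tps:.1f} tok/s")
```

A longer ICL prompt mainly inflates TTFT (prefill) and also raises TPOT, since every decoding step attends over the longer context.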

Done while @University of Twente with Sounic Akkaraju

Multitask Learning, Dynamic Loss Balancing, and Sequential Enhancement for Road Safety Assessment

Introduction

In this work, I recreate and extend previous approaches to automated road safety attribute assessment by applying advanced representation learning models in computer vision. Instead of the previously used low-capacity ResNet18 backbone, I explore the performance of self-supervised vision transformer models (TIPS, DINOv2) as well as ConvNeXt convolutional architectures pretrained on large-scale road scene datasets. Using linear evaluation and fine-tuning, alongside additional ablation studies on model optimization, the goal is to achieve more accurate and scalable automation of road infrastructure safety assessment.

Challenge and intro to iRAP-BiH dataset

The dataset represents a multi-class, multi-label road attribute classification problem, where each 10-meter road segment is annotated with multiple safety and infrastructure attributes defined by the iRAP standard.

The data consists of 226,449 geo-referenced images extracted from high-resolution (2704×2028, 25 FPS) video recorded along 2,300 km of public roads in Bosnia and Herzegovina, with each segment represented by a 384×288 frame. The dataset is split into training (214,073), validation (5,813), and test (6,563) sets, ensuring that segments from the same road do not cross splits, which prevents temporal leakage in sequence models.

A key challenge is label sparsity and class imbalance: while some attributes (e.g., Area Type, Carriageway Label) are well represented, others (e.g., pedestrian flow indicators, motorcycle flow) occur infrequently, making them harder to learn. This imbalance leads to large performance variation across attributes, as seen in accuracy differences between models.

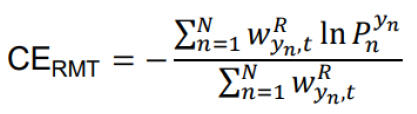

Recall-based cross-entropy

The iRAP-BiH dataset exhibits strong class imbalance both within individual classification tasks (some classes appear far more frequently than others) and between tasks (some attributes are much harder and less frequent overall). Since this is a multi-task, multi-head classification setup, the total loss is computed as the average across all attribute-specific losses. This means that tasks containing rare or hard-to-recognize classes can disproportionately dominate optimization, causing the model to overfit to these attributes.

To address the imbalance, the standard cross-entropy loss can be modified by applying inverse-frequency weighting, where each class is assigned a weight proportional to the inverse of its frequency. However, a static weighting scheme can lead to instability and false positives for rare classes.

Therefore, prior work introduces dynamic loss weighting, where class weights are adjusted based on the model’s ongoing performance (response rate) per class. Finally, to prevent any single task from overwhelming the multi-task objective, a normalized per-task loss formulation is used, ensuring balanced optimization across all tasks regardless of label distribution.
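A sketch of the three ingredients, under assumptions: static inverse-frequency weights, dynamic weights that grow as a class's current recall drops (one simple variant, w_c ∝ 1 − recall_c, not necessarily the exact formula from the prior work), and a per-task loss normalised by the sum of sample weights so every attribute contributes on a comparable scale before averaging. All function names are hypothetical.

```python
import numpy as np

def inverse_frequency_weights(labels, num_classes, eps=1.0):
    """Static weights proportional to 1 / count_c, rescaled to mean 1."""
    counts = np.bincount(labels, minlength=num_classes).astype(float)
    w = 1.0 / (counts + eps)
    return w * num_classes / w.sum()

def recall_based_weights(per_class_recall, floor=0.05):
    """Dynamic weights: classes the model currently misses (low recall) get larger weight."""
    w = np.maximum(1.0 - np.asarray(per_class_recall, dtype=float), floor)
    return w * len(w) / w.sum()

def weighted_ce(log_probs, labels, class_weights):
    """Weighted cross-entropy for one task, normalised by the batch's total sample weight."""
    w = class_weights[labels]
    nll = -log_probs[np.arange(len(labels)), labels]
    return float((w * nll).sum() / w.sum())

def multitask_loss(task_outputs):
    """Average of normalised per-task losses; task_outputs holds (log_probs, labels, weights)."""
    return float(np.mean([weighted_ce(lp, y, w) for lp, y, w in task_outputs]))

labels = np.array([0, 0, 0, 1])
w_static = inverse_frequency_weights(labels, num_classes=2)  # rare class 1 gets the larger weight
w_dynamic = recall_based_weights([0.9, 0.5])                 # class 1 is recalled less often
uniform = np.log(np.full((4, 2), 0.5))
print(w_static, w_dynamic, multitask_loss([(uniform, labels, w_static), (uniform, labels, w_dynamic)]))
```

In training, the recall estimates would be refreshed periodically (e.g. per epoch on the training or validation set), which is what makes the weighting dynamic.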

Methodology - local recognition

The approach combines local visual recognition with short-range temporal context. For each road segment at time T, the model also processes frames from T–1 and T–4, allowing it to capture gradual changes in the scene. A ResNet18 backbone pretrained on semantic segmentation extracts visual features, which are then passed through Spatial Pyramid Pooling to obtain a multi-scale, fixed-dimensional representation. For each of the 43 attributes, the model applies attribute-specific attention, where learned query vectors highlight the most relevant spatial features. The representations from the three time steps are then concatenated and fed into separate classification heads, one per attribute.
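The attribute-specific attention and temporal concatenation can be sketched in NumPy as follows. Scaled dot-product scoring and treating each spatial position (or SPP bin) as one token are assumptions about the exact design; in the real model the query vectors are learned jointly with the backbone.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attribute_attention(features, queries):
    """Pool spatial features separately for each attribute.

    features: (num_positions, dim) flattened spatial features of one frame.
    queries:  (num_attributes, dim) one learned query vector per attribute.
    Returns (num_attributes, dim): one attention-weighted feature per attribute.
    """
    scores = queries @ features.T / np.sqrt(features.shape[1])  # (A, P)
    weights = softmax(scores, axis=1)                            # attention over positions
    return weights @ features

def temporal_concat(per_frame):
    """Concatenate per-attribute features from frames T, T-1 and T-4 along the feature axis."""
    return np.concatenate(per_frame, axis=-1)

rng = np.random.default_rng(0)
queries = rng.normal(size=(43, 64))                  # 43 attributes
frames = [rng.normal(size=(12, 64)) for _ in range(3)]  # frames T, T-1, T-4
pooled = temporal_concat([attribute_attention(f, queries) for f in frames])
print(pooled.shape)  # (43, 192): one input vector per attribute-specific classification head
```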

Methodology - sequential adjustment

In the second stage, the model refines its initial, frame-level predictions by incorporating broader temporal context. Instead of relying on hand-crafted temporal rules, this phase uses a bidirectional LSTM to learn patterns across a sequence of 21 consecutive segments (10 before and 10 after the current frame), allowing the model to leverage both past and future information. Each attribute is processed by its own Bi-LSTM module, which receives a combination of the original logits and embedded class representations. After the temporal sequence is encoded, a fully connected layer with softmax produces the final refined prediction. Training uses dynamically weighted cross-entropy to account for class imbalance across attributes.
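Preparing the 21-segment context windows that such a Bi-LSTM consumes could look like the sketch below. Padding the road's start and end by repeating the first and last segment is an assumption (masking is another option), and the class-embedding input mentioned above is not shown.

```python
import numpy as np

def sequence_windows(logits, half_width=10):
    """Build a (2 * half_width + 1)-segment context window around every segment.

    logits: (num_segments, num_classes) frame-level predictions for one road and one attribute.
    Returns (num_segments, 2 * half_width + 1, num_classes); the centre step is the segment itself.
    """
    padded = np.pad(logits, ((half_width, half_width), (0, 0)), mode="edge")
    idx = np.arange(len(logits))[:, None] + np.arange(2 * half_width + 1)[None, :]
    return padded[idx]

road_logits = np.random.default_rng(0).normal(size=(50, 4))
windows = sequence_windows(road_logits)
print(windows.shape)  # (50, 21, 4): 10 segments before, the current one, 10 after
```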

Methodology - models used for linear probing

DINOv2 is a self-supervised vision transformer trained using a teacher–student framework that learns semantically rich visual representations without labeled data, making it a strong foundation model for tasks like classification and segmentation.

OneFormer is a unified transformer architecture that performs semantic, instance, and panoptic segmentation within a single model, using task tokens to adapt dynamically to each segmentation objective while maintaining high generalization and efficiency.

UniDepthV2 is a versatile monocular depth estimation model capable of supervised, semi-supervised, and self-supervised learning, leveraging a depth confidence scale and feature tokenization to integrate both local and global geometric cues.

The image below showcases features extracted from one image of the iRAP-BiH dataset.

Results - DINOv2 ViT-b/14 - local recognition

We are not fine-tuning models here; rather, we freeze the model and use it as a feature extractor. Once we have all our features, we train an MLP head on top of them for our downstream task.

The DINOv2 ViT-b/14 model was evaluated through a series of ablation studies involving optimizer choice, learning rate, network capacity, and normalization strategies using frozen feature representations. Results showed that Adam consistently outperformed SGD, and the best configuration used a single fully connected layer with a learning rate of 1e-2, achieving the highest macro F1 across validation and test sets. Further tuning indicated that batch size 32, weight decay 1e-3, a scheduler factor of 0.8, and ImageNet normalization with registers performed best.
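A self-contained sketch of such a linear probe: a single fully connected softmax layer trained with Adam on precomputed frozen features, using the learning rate, weight decay, and batch size quoted above. NumPy stands in for the actual training framework, weight decay is applied as an L2 term in the gradient (as in classic Adam), the scheduler is omitted, and the synthetic features are placeholders.

```python
import numpy as np

def train_linear_probe(feats, labels, num_classes, lr=1e-2, weight_decay=1e-3,
                       epochs=20, batch_size=32, seed=0):
    """Single FC softmax layer on frozen features, trained with Adam."""
    rng = np.random.default_rng(seed)
    n, d = feats.shape
    params = [np.zeros((d, num_classes)), np.zeros(num_classes)]  # W, b
    m = [np.zeros_like(p) for p in params]
    v = [np.zeros_like(p) for p in params]
    beta1, beta2, eps, t = 0.9, 0.999, 1e-8, 0
    for _ in range(epochs):
        order = rng.permutation(n)
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            x, y = feats[idx], labels[idx]
            logits = x @ params[0] + params[1]
            p = np.exp(logits - logits.max(1, keepdims=True))
            p /= p.sum(1, keepdims=True)
            p[np.arange(len(y)), y] -= 1.0                # gradient of cross-entropy w.r.t. logits
            grads = [x.T @ p / len(y) + weight_decay * params[0], p.mean(0)]
            t += 1
            for i, g in enumerate(grads):                 # Adam update with bias correction
                m[i] = beta1 * m[i] + (1 - beta1) * g
                v[i] = beta2 * v[i] + (1 - beta2) * g * g
                m_hat, v_hat = m[i] / (1 - beta1 ** t), v[i] / (1 - beta2 ** t)
                params[i] = params[i] - lr * m_hat / (np.sqrt(v_hat) + eps)
    return params[0], params[1]

# Usage with synthetic "frozen features" from three well-separated classes.
rng = np.random.default_rng(0)
centers = rng.normal(0, 3, size=(3, 16))
labels = rng.integers(0, 3, size=300)
feats = centers[labels] + rng.normal(size=(300, 16))
W, b = train_linear_probe(feats, labels, num_classes=3)
print(((feats @ W + b).argmax(1) == labels).mean())
```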

Results - OneFormer - local recognition

With OneFormer, we ran only one series of evaluations and did not dig deep into the various options available (hyperparameter tuning, pretraining checkpoints, etc.). Here are the results of linear probing of the OneFormer ConvNeXtV2-L model pretrained on the Vistas dataset at a resolution of 384x384. It showed lower performance than the DINOv2-b/14 model.

Results - sequential adjustment

Here we compare linear probing of the DINOv2-b/14 model with full fine-tuning of the ResNet18 model trained with pyramid upscaling. The two approaches perform similarly, suggesting, somewhat unexpectedly, that the smaller model was able to match the performance of the larger foundation model.

Conclusions

Several challenges identified in earlier studies remain unresolved even with newer classification models. The annotation of safety features is inherently subjective, as some road elements should only be labeled upon their first appearance, which local detection alone cannot capture; sequence alignment helps address this issue effectively. In this work, linear evaluation of the DINOv2 ViT-b/14 model achieved competitive but not superior results compared to ResNet18, while fine-tuning the entire model proved suboptimal. Future experiments will therefore explore LoRA-based adaptation, partial fine-tuning, and transformer architectures such as OneFormer, UniDepth, ViT, and Swin, especially given that sequential alignment improved macro F1 by 7.67 and 9.07 points for ResNet18 and DINOv2, respectively.

Skin Lesion Classification for Melanoma Detection

- See on GitHub

- Project documentation

- Technical Documentation

- Poster (in Croatian)

- Model pth on HuggingFace

Introduction

Melanoma is a highly aggressive form of skin cancer arising from uncontrolled melanocyte growth, responsible for most skin cancer–related deaths despite being less common than other skin cancers. Because melanoma can spread rapidly through the body, early detection is critical, and current diagnosis relies heavily on dermatologists visually assessing skin lesions. However, these manual methods are time-consuming and subjective.

When developing such diagnostic models, it is essential to ensure fairness and reliability across demographic groups to prevent systematic bias. ML systems can introduce several types of harms, including allocation harms (unequal treatment opportunities), quality-of-service harms (unequal model accuracy), and stereotyping harms (reinforcing biased associations).

To evaluate fairness, parity constraints such as demographic parity, equalized odds, and equal opportunity are applied, and the corresponding disparity metrics, which measure performance gaps between groups, are used to quantify bias. In melanoma detection, allocation harms are most critical, as false negatives (missed malignancies) pose far greater risk than false positives, making demographic parity the priority fairness objective.

Fairness of machine learning (ML) systems

Bias is defined as a systematic error in decision-making processes that results in unfair outcomes. ML algorithms may exhibit several types of bias, which can be introduced through the data, the model design, user interactions, etc. In this work, we focus primarily on data-induced bias. Our ISIC 2020 dataset exhibits a severe underrepresentation of darker skin types, as well as an imbalance between malignant and benign examples. Therefore, our objective is not only to achieve strong performance on the test dataset with respect to the target metric, but also to develop a model that actively mitigates bias. In our work, we aimed to train a model that is fair across skin tone groups by employing data augmentation techniques, preprocessing strategies, custom loss functions, intelligent sampling approaches, and skin tone-aware training procedures.

Domain-aware training

Domain-aware machine learning refers to the process of integrating knowledge or bias about the specific characteristics or distribution shifts of a given domain into the model training pipeline.

To successfully enable such training, the model’s classification head, loss function, and evaluation procedure often need to be adjusted.

In our case, the goal is not only to train a high-performing classifier according to an arbitrary target metric (e.g., recall), but also to ensure the model performs equitably well across different skin color groups. In other words, we aim to build a classifier that is domain-aware, with domains corresponding to skin color categories.

This implies two key assumptions: first, that we either know a priori or can infer the skin color group of each image; and second, that our baseline model does not perform equally across these groups.

Fairness through awareness

Instead of ignoring sensitive attributes, this approach explicitly encodes domain information, in this case skin color groups, within the model to directly mitigate bias. By conditioning the classification head on domain labels and using multiple domain-specific classifiers (such a classifier is referred to as an ND-way classifier, where N is the number of classes and D is the number of domains), the model can maintain balanced performance across demographic groups. Additionally, this setup enables inverse frequency weighting at the class-domain level, improving fairness and handling class imbalance more precisely.
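A sketch of the bookkeeping behind an ND-way head: each (class, domain) pair gets its own output index, and inverse-frequency weights are computed per class-domain cell. The index layout `y * D + d`, the smoothing constant, and the helper names are illustrative assumptions.

```python
import numpy as np

def joint_index(y, d, num_domains):
    """Map (class y, domain d) to one of the N * D outputs of an ND-way classifier."""
    return y * num_domains + d

def class_domain_weights(y, d, num_classes, num_domains, eps=1.0):
    """Inverse-frequency weight per (class, domain) cell, rescaled to mean 1 over cells."""
    counts = np.bincount(joint_index(y, d, num_domains),
                         minlength=num_classes * num_domains).astype(float)
    w = 1.0 / (counts + eps)
    return w * len(w) / w.sum()

# Usage: 2 classes (benign = 0, malignant = 1) and 2 skin-tone domains.
y = np.array([0, 0, 0, 1])
d = np.array([0, 0, 1, 1])
w = class_domain_weights(y, d, num_classes=2, num_domains=2)
print(w.reshape(2, 2))  # rows: classes, columns: domains
```

During training, the target of each image is `joint_index(y, d, D)` and its cross-entropy term is scaled by `w[joint_index(y, d, D)]`, so rare cells such as malignant lesions on darker skin are not drowned out.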

Prior shift inference

Assume we have an ND-way classifier with a shared softmax layer. When interpreting model outputs as probabilities, a test-time inference strategy that suppresses class-domain correlation, which we refer to as domain-discriminative inference, can be formulated as:

In contrast to an ND-way classifier, a per-domain N-way classifier assumes domain-independent inference, which can be expressed as:
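One way to implement the two inference rules, stated here as assumptions rather than the exact formulas used in the project. For the shared-softmax ND-way classifier, Bayes' rule gives P_te(y, d | x) ∝ P_tr(y, d | x) · P_te(y, d) / P_tr(y, d); if the test-time class prior is assumed uniform within each domain and the domain prior is unchanged, the correction factor is proportional to 1 / P_tr(y | d), after which the domain is summed out. For per-domain N-way heads, the sketch simply sums class logits over domains.

```python
import numpy as np

def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def prior_shift_inference(nd_logits, train_class_given_domain):
    """Domain-discriminative inference for a shared-softmax ND-way classifier.

    nd_logits: (N, D) logits for one image, one per (class, domain) pair.
    train_class_given_domain: (N, D) training-set estimate of P_tr(y | d).
    """
    joint = softmax(nd_logits.ravel()).reshape(nd_logits.shape)  # P_tr(y, d | x)
    # Divide out the training class-domain correlation, then marginalise over domains.
    scores = (joint / train_class_given_domain).sum(axis=1)
    return int(np.argmax(scores))

def domain_independent_inference(per_domain_logits):
    """Per-domain N-way heads, (N, D) logits: sum class scores over domains."""
    return int(np.argmax(per_domain_logits.sum(axis=1)))

# One domain where the training prior strongly favours class 0.
logits = np.log(np.array([[0.6], [0.4]]))
prior = np.array([[0.9], [0.1]])
print(prior_shift_inference(logits, prior))  # 1: the raw argmax (class 0) is corrected
```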

ISIC 2020 dataset

I jumped right into fairness without even introducing the dataset. The ISIC 2020 dataset consists of 33,126 annotated training examples and 10,982 test images without annotations. These images depict skin lesions from over 2,000 patients, with both malignant and benign cases represented.

As we are developing a skin tone-aware model, the first thing we needed to do was estimate skin tone. To do so, each image undergoes preprocessing to isolate skin regions: hair is removed using DullRazor, contrast is enhanced with CLAHE, and morphological Otsu thresholding excludes the lesion and other pigmentation. The remaining skin pixels are then clustered to identify the dominant skin color, represented in the CIELAB color space, from which the Individual Typology Angle (ITA) is calculated using the lightness (L) and blue–yellow (b) components. The resulting continuous ITA values are finally discretized into six skin tone groups, enabling fairness analysis across tone-based demographic subgroups.

This is the ITA-based grouping that we used:

And here we can see an example of the skin-tone estimation process:

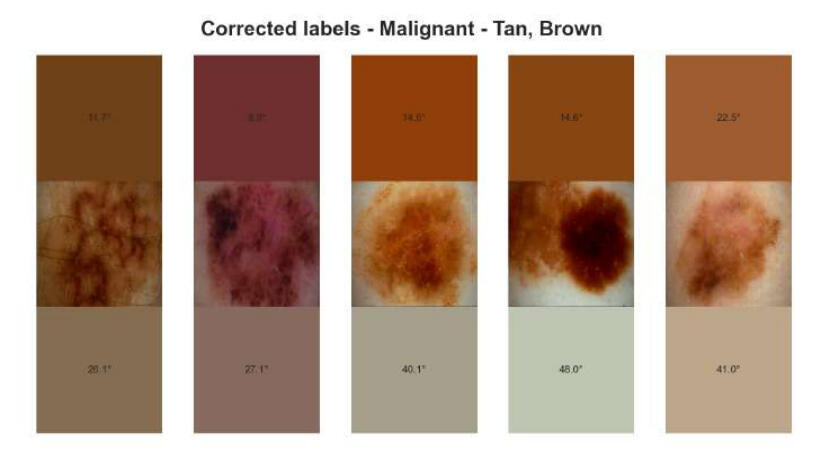
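The ITA step itself is a one-line formula, ITA = arctan((L − 50) / b) · 180 / π. The sketch below pairs it with the six-group thresholds commonly cited in the dermatology literature; these thresholds are an assumption and may differ from the grouping used in this project.

```python
import math

# Commonly cited ITA thresholds in degrees (assumption; the project's grouping may differ).
ITA_GROUPS = [(55, "very light"), (41, "light"), (28, "intermediate"),
              (10, "tan"), (-30, "brown")]

def ita_degrees(L, b):
    """Individual Typology Angle from CIELAB lightness L and blue-yellow component b.

    atan2 equals arctan((L - 50) / b) for b > 0, the usual case for skin,
    and also avoids division by zero when b == 0.
    """
    return math.degrees(math.atan2(L - 50.0, b))

def ita_group(ita):
    for threshold, name in ITA_GROUPS:
        if ita > threshold:
            return name
    return "dark"

print(ita_group(ita_degrees(70.0, 15.0)))  # light (ITA is about 53.1 degrees)
```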

The estimation was not completely error-free, so we manually reviewed all malignant cases for errors. The next image shows the labels we changed after manual inspection.

Some images had artifacts (e.g., pen markings, hair, and dark corners). Such issues could not be resolved in this case, and they posed a risk of the model learning shortcuts when classifying such examples, e.g., if the model learns to correlate the presence of pen markings with the malignant class.

Data preprocessing methods

Segmentation masks

The segmentation procedure is based on classical image processing: contrast enhancement with CLAHE, hair removal with DullRazor, and morphologically expanded Otsu thresholding to remove skin pigmentation.

Note that our threshold-based segmentation method sometimes incorrectly identifies image edges as part of the lesion. You can see an example in the image below.

An example of a segmentation mask in CIELAB can be seen here:

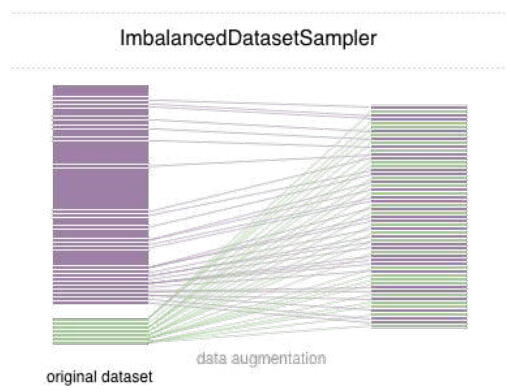
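The thresholding part of this procedure can be sketched in plain NumPy. The sketch computes Otsu's threshold by maximizing the between-class variance, keeps the darker (lesion) side, and then dilates the result with a square structuring element so that pigmentation around the lesion is also excluded. It assumes a uint8 grayscale input and omits CLAHE and DullRazor. In practice one would typically call OpenCV (`cv2.threshold` with `cv2.THRESH_OTSU`, `cv2.dilate`, `cv2.createCLAHE`) instead of these hand-written helpers.

```python
import numpy as np

def otsu_threshold(gray: np.ndarray) -> int:
    """Return Otsu's threshold t for a uint8 image (class 0 = pixels <= t)."""
    hist = np.bincount(gray.ravel(), minlength=256).astype(np.float64)
    total = hist.sum()
    omega = np.cumsum(hist) / total                    # weight of class 0 for each t
    mu = np.cumsum(hist * np.arange(256)) / total      # cumulative mean up to t
    mu_t = mu[-1]                                      # global mean
    denom = omega * (1.0 - omega)
    with np.errstate(divide="ignore", invalid="ignore"):
        sigma_b = (mu_t * omega - mu) ** 2 / denom     # between-class variance
    sigma_b[denom == 0] = 0.0                          # thresholds leaving one class empty
    return int(np.argmax(sigma_b))

def dilate(mask: np.ndarray, radius: int) -> np.ndarray:
    """Binary dilation with a (2r+1) x (2r+1) square structuring element."""
    h, w = mask.shape
    padded = np.pad(mask, radius, mode="constant", constant_values=False)
    out = np.zeros((h, w), dtype=bool)
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out |= padded[dy:dy + h, dx:dx + w]
    return out

def lesion_mask(gray: np.ndarray, radius: int = 2) -> np.ndarray:
    # Lesions are darker than the surrounding skin, so keep pixels at or below the threshold,
    # then expand the mask morphologically
    t = otsu_threshold(gray)
    return dilate(gray <= t, radius)
```

Because the threshold is global, dark image corners end up on the lesion side just as easily as the lesion itself, which is consistent with the edge artifacts noted above.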

Data samplers

To address the strong class imbalance between benign and malignant lesions, we tested several sampling strategies: a balanced batch sampler, an imbalanced dataset sampler, and an undersampler. The balanced batch sampler enforces equal class representation in every batch but risks overfitting due to repeated minority samples. The undersampler, on the other hand, may cause information loss by discarding valuable benign data. The ImbalancedDatasetSampler offers a more flexible alternative: it draws samples with probabilities inversely proportional to class frequency, keeping epochs approximately balanced without discarding benign data. Combined with augmentation, this improves robustness.
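Inverse-frequency sampling can be sketched in a few lines. Each sample is weighted by 1 / (size of its class), and indices are drawn with replacement. This mirrors what `torch.utils.data.WeightedRandomSampler` does when given such weights, and is similar in spirit to the ImbalancedDatasetSampler. The function name and the fixed seed are illustrative assumptions.

```python
import numpy as np

def inverse_frequency_sample(labels, num_samples: int, seed: int = 0) -> np.ndarray:
    """Draw sample indices so that every class is (in expectation) equally represented."""
    labels = np.asarray(labels)
    counts = np.bincount(labels)
    weights = 1.0 / counts[labels]        # each sample weighted by 1 / |its class|
    probs = weights / weights.sum()       # every class gets the same total probability mass
    rng = np.random.default_rng(seed)
    return rng.choice(len(labels), size=num_samples, replace=True, p=probs)
```

With a 49:1 benign-to-malignant split, about half of the drawn indices are malignant. Malignant images therefore repeat often within an epoch, which is why pairing this sampler with augmentation matters.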

Loss functions and class-weighting

In melanoma classification, where the ISIC 2020 dataset exhibits a severe 49:1 benign-to-malignant imbalance, we applied both sampling and loss-based strategies to ensure the model effectively learns from minority (malignant) examples. Standard cross-entropy loss was adjusted using Inverse Frequency Weighting (IFW) to counter class bias, and further extended to a recall-based loss, dynamically scaling class weights according to the model’s recall over time to balance precision–recall trade-offs. Additionally, Online Hard Example Mining (OHEM) and Focal Loss were explored to prioritize hard or misclassified samples, reducing the dominance of easy negatives and improving learning stability in the presence of extreme class imbalance.
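A NumPy sketch of three of these losses: inverse-frequency-weighted cross-entropy, focal loss, and a simple OHEM variant that averages only the hardest fraction of a batch. The weighted cross-entropy is normalized by the sum of the target weights, which mirrors `torch.nn.CrossEntropyLoss(weight=...)` with mean reduction. The focal-loss γ and the OHEM keep ratio are assumed defaults rather than the values we tuned. The recall-based variant is not shown.

```python
import numpy as np

def inverse_frequency_weights(labels: np.ndarray, num_classes: int) -> np.ndarray:
    counts = np.bincount(labels, minlength=num_classes).astype(np.float64)
    return counts.sum() / (num_classes * counts)        # rare classes get large weights

def log_softmax(logits: np.ndarray) -> np.ndarray:
    z = logits - logits.max(axis=1, keepdims=True)      # numerical stability
    return z - np.log(np.exp(z).sum(axis=1, keepdims=True))

def target_log_probs(logits: np.ndarray, labels: np.ndarray) -> np.ndarray:
    return log_softmax(logits)[np.arange(len(labels)), labels]

def weighted_cross_entropy(logits, labels, class_weights) -> float:
    w = class_weights[labels]
    return float(-(w * target_log_probs(logits, labels)).sum() / w.sum())

def focal_loss(logits, labels, gamma: float = 2.0) -> float:
    logp = target_log_probs(logits, labels)
    p = np.exp(logp)
    # (1 - p)^gamma down-weights easy, already well-classified samples
    return float((-((1.0 - p) ** gamma) * logp).mean())

def ohem_cross_entropy(logits, labels, keep_ratio: float = 0.25) -> float:
    losses = -target_log_probs(logits, labels)
    k = max(1, int(keep_ratio * len(losses)))
    return float(np.sort(losses)[-k:].mean())           # average over the k hardest samples
```

With a 49:1 label split, the malignant weight comes out 49 times larger than the benign one. Setting γ = 0 reduces the focal loss to plain cross-entropy.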

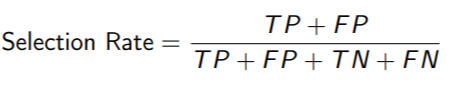

Evaluation metrics

In melanoma classification, traditional performance metrics such as accuracy, precision, recall, F1-score, false positive/negative rates (FPR/FNR), specificity (TNR), and balanced accuracy are used to evaluate overall model quality, especially under class imbalance. However, to assess fairness across skin tone groups, additional fairness metrics are introduced. These include the Selection Rate, which measures how often samples are predicted as malignant across groups; the Demographic Parity Difference (DPD), which quantifies disparities in positive prediction rates; Equalized Odds, which ensures both TPR and FPR are similar across groups; and Equal Opportunity, a relaxed version focusing only on equal TPR, prioritizing fair detection of malignant cases even at the cost of increased false positives.

Demographic parity difference:

Selection rate:

Equal opportunity:

Equalized odds:

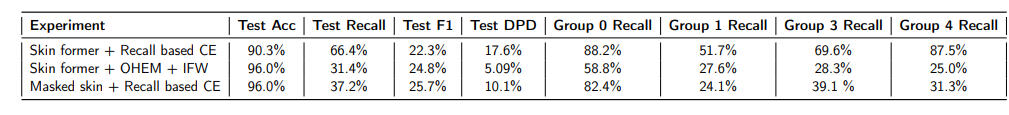
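The group metrics can be computed from per-group rates. The sketch below uses the common difference-based definitions: DPD is the largest gap in selection rate, equal opportunity is the largest gap in TPR, and equalized odds is the larger of the TPR and FPR gaps. Fairlearn's `demographic_parity_difference` and `equalized_odds_difference` follow the same convention by default. Whether our reported "equal opportunity scores" used a difference or a ratio is not specified here, so treat the exact form as an assumption.

```python
import numpy as np

def group_rates(y_true: np.ndarray, y_pred: np.ndarray, groups: np.ndarray) -> dict:
    """Per-group (selection rate, TPR, FPR) for binary labels and predictions."""
    out = {}
    for g in np.unique(groups):
        m = groups == g
        yt, yp = y_true[m], y_pred[m]
        sel = yp.mean()                                           # P(y_hat = 1 | G = g)
        tpr = yp[yt == 1].mean() if np.any(yt == 1) else np.nan   # P(y_hat = 1 | Y = 1, G = g)
        fpr = yp[yt == 0].mean() if np.any(yt == 0) else np.nan   # P(y_hat = 1 | Y = 0, G = g)
        out[g] = (sel, tpr, fpr)
    return out

def fairness_gaps(y_true: np.ndarray, y_pred: np.ndarray, groups: np.ndarray) -> dict:
    rates = np.array(list(group_rates(y_true, y_pred, groups).values()), dtype=float)

    def gap(col: int) -> float:
        # largest between-group difference, ignoring groups without positives/negatives
        return float(np.nanmax(rates[:, col]) - np.nanmin(rates[:, col]))

    return {
        "demographic_parity_difference": gap(0),
        "equal_opportunity_difference": gap(1),
        "equalized_odds_difference": max(gap(1), gap(2)),
    }
```

Two groups can have identical selection rates (DPD = 0) while one of them still misses half of its malignant cases. This is why equal opportunity is the more relevant criterion when false negatives are the main concern.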

Results

Baseline model

The baseline model is a ConvNeXt-Tiny architecture with 28 million parameters and 4.5 GFLOPs. We use the variant pretrained on ImageNet-22k and fine-tuned on ImageNet-1k with an input resolution of 224×224. We applied augmentations to the malignant class, used a learning rate of 5e-5, and set the weight decay to 5e-3. The model was trained for 10 epochs on RGB images, using inverse frequency weighting with the standard cross-entropy loss.

CIELAB colorspace results

All experiments used the ConvNeXt-Tiny model with the AdamW optimizer, a cosine scheduler, 224×224 input resolution, and CIELAB normalization based on ISIC Challenge dataset statistics. Working in the CIELAB space improved recall by 5.8 percentage points (from 50.4% to 56.2%), while the F1 score remained below 30%. Although higher recall correlated with lower DPD, Groups 1 and 3 still lagged behind, indicating the need for further recall and fairness improvements in subsequent experiments.
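The CIELAB input pipeline can be sketched as an sRGB → XYZ → L*a*b* conversion followed by per-channel standardization. The sketch assumes a D65 white point and the standard sRGB matrix. `channel_stats` is a hypothetical helper that recomputes mean and standard deviation from whatever training images it receives, since the ISIC statistics themselves are not listed here. `skimage.color.rgb2lab` is an off-the-shelf alternative for the conversion.

```python
import numpy as np

# D65 reference white and the standard linear-sRGB -> XYZ matrix
WHITE_D65 = np.array([0.95047, 1.0, 1.08883])
RGB_TO_XYZ = np.array([
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
])

def srgb_to_lab(rgb: np.ndarray) -> np.ndarray:
    """rgb: (..., 3) floats in [0, 1]; returns (..., 3) CIELAB with L in [0, 100]."""
    rgb = np.clip(rgb, 0.0, 1.0)
    # undo the sRGB gamma curve
    linear = np.where(rgb <= 0.04045, rgb / 12.92, ((rgb + 0.055) / 1.055) ** 2.4)
    xyz = linear @ RGB_TO_XYZ.T / WHITE_D65           # XYZ relative to the white point
    delta = 6.0 / 29.0
    f = np.where(xyz > delta ** 3, np.cbrt(xyz), xyz / (3 * delta ** 2) + 4.0 / 29.0)
    L = 116.0 * f[..., 1] - 16.0
    a = 500.0 * (f[..., 0] - f[..., 1])
    b = 200.0 * (f[..., 1] - f[..., 2])
    return np.stack([L, a, b], axis=-1)

def channel_stats(lab_images: np.ndarray):
    # lab_images: (N, H, W, 3); per-channel mean/std over the training set
    return lab_images.mean(axis=(0, 1, 2)), lab_images.std(axis=(0, 1, 2))

def normalize(lab: np.ndarray, mean: np.ndarray, std: np.ndarray) -> np.ndarray:
    return (lab - mean) / std
```

Standardizing per channel matters here because L, a, and b live on very different ranges (roughly 0 to 100 versus about ±100 around zero). ImageNet-style RGB statistics would therefore be meaningless for these inputs.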

Segmentation-aided classification and skin-former results

The ConvNeXt-Tiny model was tested on both segmented and color-transformed (skin-former) images to assess its robustness to input variations. The skin-former approach outperformed masked inputs, achieving higher recall (+9.5% vs. EfficientNetV2-M) and fewer false positives. Masked images, by contrast, caused training instability, likely because masking shifts the input distribution away from the ImageNet pretraining data.

Domain-aware training

Using skin-former augmentation and domain-aware training, the domain-discriminative setup achieved the best performance, with an overall recall of 74.5% and all but one skin tone group exceeding 80% recall. In contrast, domain-independent training underperformed, showing very low recall due to overpredicting benign cases. Although this approach reduced the F1 score to 17.4%, the trade-off was considered acceptable given the dataset’s 49:1 class imbalance, prioritizing higher recall over precision.

Fairness analysis

The fairness analysis revealed a trade-off between recall and F1 score, as models achieving higher recall often did so at the cost of more false positives and lower precision. Strict thresholds were applied, excluding models with FNR or FPR above 50%, recall below 50%, or F1 below 20%, which left one best-performing model per architecture. The domain-discriminative ConvNeXt-Tiny achieved the highest recall (72.3%), though it also had the highest DPD (18.9%), indicating greater disparity in selection rates across skin-tone groups. Overall, DPD ranged from 8% to 19%, showing a modest fairness gap. Models with higher recall generally exhibited lower equal opportunity scores and higher selection rates, emphasizing the inherent recall–fairness trade-off.

Group-level metrics

The per-group analysis showed clear recall gaps between lighter and darker skin tones, with the earlier RGB and CIELAB models showing differences of up to 31% between groups. The EfficientNetV2-M model narrowed that gap to about 14% while still keeping false negatives at a reasonable level. The skin-former and especially the domain-discriminative ConvNeXt-Tiny models stood out, delivering the highest recall across every group, with the latter achieving at least 62% recall even for the most challenging cases. Overall, the domain-discriminative model proved to be the fairest and most reliable, offering consistently strong recall with only minor increases in false positives. This is an acceptable compromise when the main goal is to avoid missing malignant cases.

Statistical fairness estimation

The statistical fairness analysis evaluated image-level confidence across skin tone groups using posterior probabilities of the malignant class from the three best-performing models. ANOVA tests on all ground-truth malignant samples showed significant disparities in Experiments 1 and 3 (p < 0.05), indicating unequal confidence across skin tones, while the domain-discriminative model (Experiment 2) maintained consistent confidence despite dataset imbalance. When testing only correctly classified malignant samples, no significant bias was observed (p > 0.19 for all), suggesting models were equally confident when correct, though overall confidence bias persisted in the first and third experiments. These results highlight that domain-discriminative training offers the most stable and fair performance at both the group and image levels.
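The ANOVA test compares the mean malignant posterior across skin-tone groups. The sketch computes the one-way F statistic and its degrees of freedom from a list of per-group posterior arrays. Grouping the posteriors by skin tone is assumed to happen upstream. In practice, `scipy.stats.f_oneway(*groups)` returns both the statistic and the p-value. The p-value could also be obtained from the F distribution, e.g. `scipy.stats.f.sf(F, df_between, df_within)`.

```python
import numpy as np

def one_way_anova_f(*groups):
    """One-way ANOVA F statistic and degrees of freedom for k independent samples."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    k = len(groups)
    n = sum(len(g) for g in groups)
    grand_mean = np.concatenate(groups).mean()
    # variation of group means around the grand mean
    ss_between = sum(len(g) * (g.mean() - grand_mean) ** 2 for g in groups)
    # variation of samples around their own group mean
    ss_within = sum(((g - g.mean()) ** 2).sum() for g in groups)
    df_between, df_within = k - 1, n - k
    f = (ss_between / df_between) / (ss_within / df_within)
    return float(f), df_between, df_within
```

A large F means the group means differ more than the within-group spread would explain. In this analysis, that corresponds to the model being systematically more or less confident for some skin tones.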

Conclusions

After extensive experimentation focused on both performance and fairness, the final model selected was ConvNeXt-Tiny, trained on 224×224 CIELAB images using domain-discriminative training and inverse frequency-weighted cross-entropy loss. It achieved 72.3% recall, 78% mean per-group recall, 21.5% F1 score, 80.81% balanced accuracy, and a DPD of 18.8%, with no evidence of image-level bias. This model demonstrated the most consistent and fair performance across all skin tone groups and was therefore chosen as the final submission. The models are publicly available on Hugging Face: https://huggingface.co/Mhara/melanoma_classification

Done while @University of Zagreb, FER with Duje Štolfa

Automatic Label Error Detection Using Uncertainty Quantification

I reproduced the original article and found some inconsistencies. The idea was to use normalizing flows, but I later switched to another project.

Introduction

Label errors are defined as connected components of a predicted class that, given the image context, should be contained in the ground truth but are not. Detecting these false-positive regions is important for improving model reliability through data sanitization. To address this, a meta-classifier can be trained to identify and score potential errors by comparing the ground truth with model predictions, flagging suspicious regions for review.

Proposed method

The proposed method introduces a novel approach for detecting label errors in semantic segmentation by analyzing connected components within predicted segmentations. Each potential label error is evaluated using a meta-classifier trained on handcrafted features such as entropy, probability differences, intersection over union (IoU), component size, and geometric distances. The authors use DeepLabV3+ with a WideResNet38 backbone and NVIDIA’s multi-scale HRNet-OCR model, trained on Cityscapes under varying label perturbation rates (0, 0.1, 0.25, 0.5). Two evaluation modes are tested: one with clean training data and perturbed test data, and another where training and test data share similar corruption levels. Finally, the method is applied to multiple datasets, where manually validated samples confirm high precision in detecting true labeling errors.
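The candidate-generation step can be sketched as follows. The code finds the connected components of each predicted class and flags those that the annotation (almost) never labels with the same class, i.e. the false-positive regions defined above. It then computes a few of the handcrafted features a meta-classifier would consume. This is a simplified sketch: it uses 4-connectivity, a plain overlap ratio instead of the paper's IoU variant, and only size, mean entropy, and mean max-probability as features. The `max_overlap` parameter and all function names are hypothetical.

```python
import numpy as np
from collections import deque

def connected_components(mask: np.ndarray):
    """4-connected component labelling of a boolean mask; returns (labels, count)."""
    h, w = mask.shape
    labels = np.zeros((h, w), dtype=int)
    count = 0
    for i in range(h):
        for j in range(w):
            if mask[i, j] and labels[i, j] == 0:
                count += 1
                labels[i, j] = count
                queue = deque([(i, j)])
                while queue:                               # breadth-first flood fill
                    y, x = queue.popleft()
                    for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                        if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and labels[ny, nx] == 0:
                            labels[ny, nx] = count
                            queue.append((ny, nx))
    return labels, count

def label_error_candidates(probs: np.ndarray, gt: np.ndarray, max_overlap: float = 0.0):
    """probs: (C, H, W) softmax output; gt: (H, W) annotation."""
    pred = probs.argmax(axis=0)
    entropy = -(probs * np.log(np.clip(probs, 1e-12, 1.0))).sum(axis=0)
    candidates = []
    for c in np.unique(pred):
        comps, n = connected_components(pred == c)
        for k in range(1, n + 1):
            comp = comps == k
            overlap = float((gt[comp] == c).mean())        # share of the component annotated as c
            if overlap <= max_overlap:                     # predicted, but missing from the labels
                candidates.append({
                    "class": int(c),
                    "size": int(comp.sum()),
                    "mean_entropy": float(entropy[comp].mean()),
                    "mean_max_prob": float(probs[:, comp].max(axis=0).mean()),
                })
    return candidates
```

Each candidate dictionary would become one row of training data for the meta-classifier, with the target indicating whether the component is a real label error.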

(In our case, DeepLabV3+ with ResNet101 achieved a mean IoU of 76.2%, compared to 83.5% for the state of the art and 79.37% reported by the authors.)

My results

Our DeepLabV3+ (ResNet101) model achieved stable performance across all perturbation levels, with meta-classifier accuracy around 80–83%. While overall recall and F1 scores were lower than those reported in the original paper, the model maintained consistent detection behavior, indicating reliable though less sensitive label error identification. These results confirm the method's potential but offer little insight into why our results differ from those of the original paper.

An example of a label error can be seen in this image. The upper image shows connected components where our model's prediction differs from the annotation. Bottom-left is the annotation and bottom-right is our model's segmentation mask.

Done while @University of Zagreb, FER under supervision of prof.dr.sc. Siniša Šegvić and Anja Delić.

Semantic Segmentation of Satellite Images

Introduction

Image segmentation is a key task in computer vision that involves dividing an image into meaningful regions and assigning class labels to individual pixels. Of its three main forms (semantic, instance, and panoptic), semantic segmentation is the most fundamental, enabling dense, pixel-level understanding of visual scenes. Because it operates at the pixel level and needs high-resolution, densely labeled datasets, it remains computationally demanding. This motivates the use of optimized architectures for efficient feature extraction and inference.

DeepGlobe land cover classification dataset

The DeepGlobe 2018 dataset was designed for three remote sensing challenges: road extraction, building detection, and land cover classification. The land cover task is a multi-class segmentation problem involving seven surface types: urban, agricultural, rangeland, forest, water, barren, and unknown. The dataset has 803 high-resolution RGB satellite images (2448×2448 pixels, 50 cm per pixel). The example below shows an original image (left) and its pixel-level annotation mask (right).
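DeepGlobe land-cover masks are stored as color-coded RGB images, so they must be mapped to class indices before training. The sketch below assumes the color codes distributed with the dataset (urban = cyan, agriculture = yellow, rangeland = magenta, forest = green, water = blue, barren = white, unknown = black). Verify these against your copy of the data. Each channel is binarized at 128 so that slight color deviations still map to the right class.

```python
import numpy as np

# Assumed DeepGlobe land-cover color codes (RGB) -> class index
DEEPGLOBE_CLASSES = {
    (0, 255, 255): 0,    # urban
    (255, 255, 0): 1,    # agriculture
    (255, 0, 255): 2,    # rangeland
    (0, 255, 0): 3,      # forest
    (0, 0, 255): 4,      # water
    (255, 255, 255): 5,  # barren
    (0, 0, 0): 6,        # unknown
}

def rgb_mask_to_index(mask_rgb: np.ndarray) -> np.ndarray:
    """(H, W, 3) uint8 color mask -> (H, W) uint8 class indices."""
    bits = (mask_rgb >= 128).astype(np.uint8)                 # binarize each channel
    index = np.full(mask_rgb.shape[:2], 6, dtype=np.uint8)    # default: unknown
    for (r, g, b), cls in DEEPGLOBE_CLASSES.items():
        match = (bits[..., 0] == r // 255) & (bits[..., 1] == g // 255) & (bits[..., 2] == b // 255)
        index[match] = cls
    return index
```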

Methodology

MagNet

Reference: https://arxiv.org/pdf/2104.03778

The MagNet architecture operates as a multi-stage, multi-scale segmentation pipeline that progressively refines image predictions from coarse to fine detail. An input image X ∈ ℝ^(H×W×3) is too large to process directly, so it is divided into patches at multiple scale levels s = 1 … m, where the patch dimensions hₛ × wₛ at each scale are chosen to span the full resolution range of the image. At every scale s, the model performs the following sequence:

1. Extract a patch Xₚˢ and its corresponding segmentation prediction Yₚ^(s−1) from the previous stage.

2. Downsample both to fit GPU memory.

3. Use the segmentation network to predict a new, scale-specific map Ōₚˢ.

4. Pass both Yₚ^(s−1) and Ōₚˢ into the refinement module, which selectively replaces uncertain regions in Yₚ^(s−1) based on a confidence score Q, producing an updated map Ȳₚˢ.

5. Finally, upsample Ȳₚˢ back to its original resolution hₛ × wₛ × C, yielding a refined segmentation map Yₚˢ.

Through these iterative refinements across scales, MagNet incrementally improves segmentation accuracy by combining global context from coarser levels with local detail from finer ones.

The refinement module is designed to improve segmentation accuracy at each scale by merging information from previous and current predictions. It receives the cumulative segmentation map from earlier scales and the scale-specific map from the current stage, and combines them into an updated output. The module estimates pixel-wise uncertainty for both maps to identify regions where the previous prediction is uncertain but the new one is confident. These regions are then selectively refined, so that only the most reliable local corrections are applied.
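The selection logic of the refinement module can be sketched without its learned parts. This is a simplified, non-learned illustration. Uncertainty is measured as 1 − (top-1 probability − top-2 probability). A pixel's score is high when the previous map is uncertain and the current map is confident. The k highest-scoring pixels take their prediction from the current map. The uncertainty measure, the product score, and the fixed k are assumptions, not MagNet's exact implementation.

```python
import numpy as np

def margin_uncertainty(probs: np.ndarray) -> np.ndarray:
    """probs: (C, H, W). 1 - (top-1 - top-2): close to 1 when the two best classes are tied."""
    top = np.sort(probs, axis=0)
    return 1.0 - (top[-1] - top[-2])

def refine(prev_probs: np.ndarray, cur_probs: np.ndarray, k: int) -> np.ndarray:
    """Replace the k pixels where the previous map is uncertain but the current one is confident."""
    score = margin_uncertainty(prev_probs) * (1.0 - margin_uncertainty(cur_probs))
    _, h, w = prev_probs.shape
    top_k = np.argsort(score.ravel())[::-1][:k]          # highest scores first
    ys, xs = np.unravel_index(top_k, (h, w))
    refined = prev_probs.copy()
    refined[:, ys, xs] = cur_probs[:, ys, xs]            # local correction from the finer scale
    return refined
```

Restricting updates to a small set of pixels keeps the global layout from the coarse scale intact. Only pixels where the finer, local view is clearly more confident can change.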

SwiftNet

Reference: https://arxiv.org/abs/1903.08469

The proposed segmentation method is built around three basic building blocks that form the foundation of the model. The first building block, the Recognition Encoder, uses ImageNet-pretrained models such as ResNet-18 and MobileNet V2, chosen for their efficiency, moderate depth, and real-time operation. The second building block, the Upsampling Decoder, restores the coarse visual features produced by the encoder back to the input resolution through a sequence of ladder-style upsampling modules with lateral connections and 3×3 convolutions, ensuring accurate reconstruction of spatial details. The third building block, the Module for Increasing the Receptive Field, enhances contextual understanding using spatial pyramid pooling (SPP) or pyramid fusion. This allows the model to capture multi-scale representations and maintain real-time performance without losing spatial resolution.

The single-scale model processes an input image through a downsampling encoder and an upsampling decoder to produce dense semantic predictions. The encoder gradually reduces spatial resolution and increases feature depth, while the spatial pyramid pooling layer expands the receptive field before decoding. The decoder restores resolution using bilinear upsampling, lateral feature summation, and 3×3 convolution blending, maintaining constant feature dimensionality throughout the reconstruction.

The interleaved pyramid fusion model enhances the receptive field and reduces capacity demands by applying two encoder instances to different levels of an image resolution pyramid. These encoders share parameters, allowing recognition of objects at multiple scales using a common feature representation. Their interleaved features are concatenated and projected into the decoder space through 1×1 convolutions. The decoder then reconstructs the final segmentation using the same upsampling process as the single-scale model, extended with additional modules for each pyramid level.

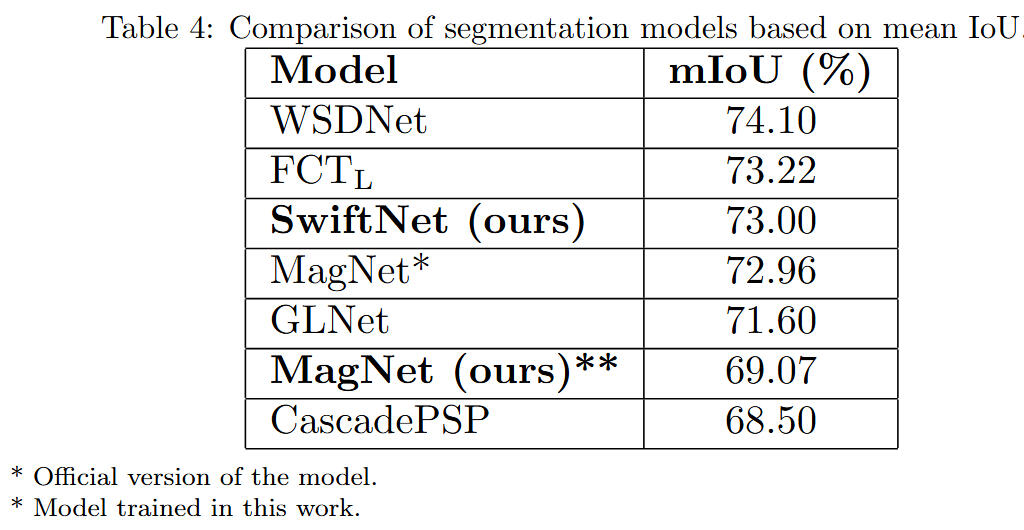

Results - MagNet

I could not fully reproduce the original paper's result with ResNet50 (the model reported in the backbone column), as seen in the table. Our base model scored 3 pp lower in IoU than the reported model. Using IFW did not help; the model actually performed worse. Using OHEM gave only an insignificant improvement.

Segmentation examples - MagNet ResNet50

From left to right: RGB image, annotation mask, coarse segmentation, refinement module.

Results - SwiftNet

The single-scale ResNet18 model performed best on this task, reaching 73% mIoU. The interleaved-pyramid SwiftNet variant did not seem to improve results.
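The mIoU figures above are conventionally computed from a confusion matrix accumulated over the whole evaluation set, not averaged per image. The sketch builds that matrix with `np.bincount` and derives per-class IoU = TP / (TP + FP + FN). The optional `ignore_index` is a hypothetical parameter that could be used, for example, to skip the "unknown" class if the evaluation protocol excludes it.

```python
import numpy as np

def confusion_matrix(gt: np.ndarray, pred: np.ndarray, num_classes: int, ignore_index=None) -> np.ndarray:
    """Rows = ground-truth class, columns = predicted class."""
    gt, pred = gt.ravel(), pred.ravel()
    if ignore_index is not None:
        keep = gt != ignore_index
        gt, pred = gt[keep], pred[keep]
    idx = gt.astype(np.int64) * num_classes + pred.astype(np.int64)
    return np.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)

def mean_iou(conf: np.ndarray):
    tp = np.diag(conf).astype(float)
    union = conf.sum(axis=0) + conf.sum(axis=1) - tp   # TP + FP + FN per class
    with np.errstate(divide="ignore", invalid="ignore"):
        iou = tp / union                               # NaN for classes absent from both maps
    return float(np.nanmean(iou)), iou
```

Summing confusion matrices across images before dividing gives large images and frequent classes their natural weight. Averaging per-image IoUs can instead be dominated by small, noisy crops.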

Segmentation examples - SingleScaleResnet18

From left to right: RGB image, annotation mask, segmentation prediction.

Conclusions

The experimental results demonstrate that the single-scale SwiftNet model outperformed the interleaved pyramid fusion and MagNet variants within this domain, achieving a mean IoU of 73.00%. Despite its simpler architecture, the single-scale approach provided more stable and accurate segmentation results across various configurations. On the evaluated benchmark, SwiftNet ranked as the third-best performing model overall, closely following WSDNet and FCTL, confirming its effectiveness and competitive balance between accuracy and efficiency.

Done while @University of Zagreb, FER under supervision of prof.dr.sc. Siniša Šegvić and Marin Kačan

Backdoor (Cyber) Attacks and Defenses on Deep Learning Models

Introduction

A backdoor attack on a deep learning model inserts a pattern (the trigger) into some training images so that the model behaves normally on clean inputs but misclassifies any input that contains the pattern. The idea is simple: we add a small random pattern to a subset of images and alter the labels of those images. Even a small number of poisoned examples, meaning image-label pairs with altered labels, can enable a successful attack in production.

Below is a simple example of a poisoned image. Many different image transformations can serve as triggers that an attacker introduces to induce the model to misclassify during inference.

We have explored both attacks and defenses in this work, as researchers are often divided in their focus between these two areas. In the following sections, we will introduce the attacks and defenses we implemented.

BadNets attack

Reference: https://arxiv.org/pdf/1708.06733

We instantiate the BadNets training-time backdoor by poisoning a fraction (p) of the MNIST training set with backdoored copies, each stamped with either a single bright pixel or a small pattern in the bottom-right corner.

We then retrain the baseline CNN so that the attacker-specified target labels (for both the single-target and all-to-all variants) become associated with the trigger. The resulting model preserves high accuracy on clean inputs while reliably producing the attacker-chosen labels for triggered inputs.
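The poisoning step can be sketched with NumPy. A small square trigger is stamped into the bottom-right corner of randomly chosen training images. The labels of those images are then set either to a single target or, for the all-to-all variant, to (y + 1) mod K, and the backdoored copies are appended to the clean set. The trigger size, pixel value, and shift-by-one mapping are illustrative assumptions.

```python
import numpy as np

def stamp_trigger(img: np.ndarray, size: int = 3, value: float = 1.0) -> np.ndarray:
    """Stamp a square trigger into the bottom-right corner of an (H, W) or (H, W, C) image."""
    out = img.copy()
    out[-size:, -size:, ...] = value
    return out

def badnets_poison(x, y, p, target=0, all_to_all=False, num_classes=10, seed=0):
    """Append backdoored copies of a fraction p of (x, y) with attacker-chosen labels."""
    rng = np.random.default_rng(seed)
    idx = rng.choice(len(x), size=int(p * len(x)), replace=False)
    x_bd = np.stack([stamp_trigger(x[i]) for i in idx])
    # single target: every triggered image -> target; all-to-all: label i -> (i + 1) mod K
    y_bd = (y[idx] + 1) % num_classes if all_to_all else np.full(len(idx), target)
    return np.concatenate([x, x_bd]), np.concatenate([y, y_bd])
```

Because the clean images stay in the training set unchanged, the model has no incentive to degrade on clean inputs. This explains why clean accuracy remains high after poisoning.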

Data Poisoning attack

Reference: https://arxiv.org/pdf/1712.05526

Data poisoning is a method where an attacker inserts a small set of training examples stamped with a secret trigger and labeled with the attacker’s chosen target. During training, the model learns this hidden association, so at test time any input containing the trigger is misclassified as the target label, while clean inputs are still classified normally. Below is an example of a rather large sunglasses backdoor; can you see it?
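The "Blend" attack evaluated later uses the blended-injection idea from this paper: the trigger key k is mixed into the whole image as x' = α·k + (1 − α)·x rather than pasted into a corner. In the sketch, the key is a placeholder array. In the "Blend kitty" experiments it would be an image such as Hello Kitty. The blend ratio α = 0.2 and the helper names are assumptions.

```python
import numpy as np

def blend_trigger(img: np.ndarray, key: np.ndarray, alpha: float = 0.2) -> np.ndarray:
    """Blended injection: x' = alpha * k + (1 - alpha) * x, for images scaled to [0, 1]."""
    return alpha * key + (1.0 - alpha) * img

def blend_poison(x, y, key, n_poison, target, alpha=0.2, seed=0):
    """Append n_poison blended copies of random training images, all relabelled to target."""
    rng = np.random.default_rng(seed)
    idx = rng.choice(len(x), size=n_poison, replace=False)
    x_bd = blend_trigger(x[idx], key[None], alpha)   # broadcast the key over the batch
    y_bd = np.full(n_poison, target)
    return np.concatenate([x, x_bd]), np.concatenate([y, y_bd])
```

A small α keeps the poisoned images visually close to the originals. This is also why such a trigger is spread over the whole image and is harder to localize than a corner patch.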

Fine-pruning defense

Reference: https://arxiv.org/pdf/1805.12185

Fine-Pruning is a defense method designed to remove backdoors from deep learning models by selectively pruning and retraining the network. In this approach, neurons (channels) of a late convolutional layer that stay dormant on clean inputs, and are therefore likely to encode the backdoor, are pruned, effectively “unlearning” the malicious behavior learned during poisoning. After pruning, the model is fine-tuned on clean data to recover its normal accuracy while keeping the attack success rate low. This process aims to preserve performance on legitimate inputs while eliminating the attacker’s hidden influence.

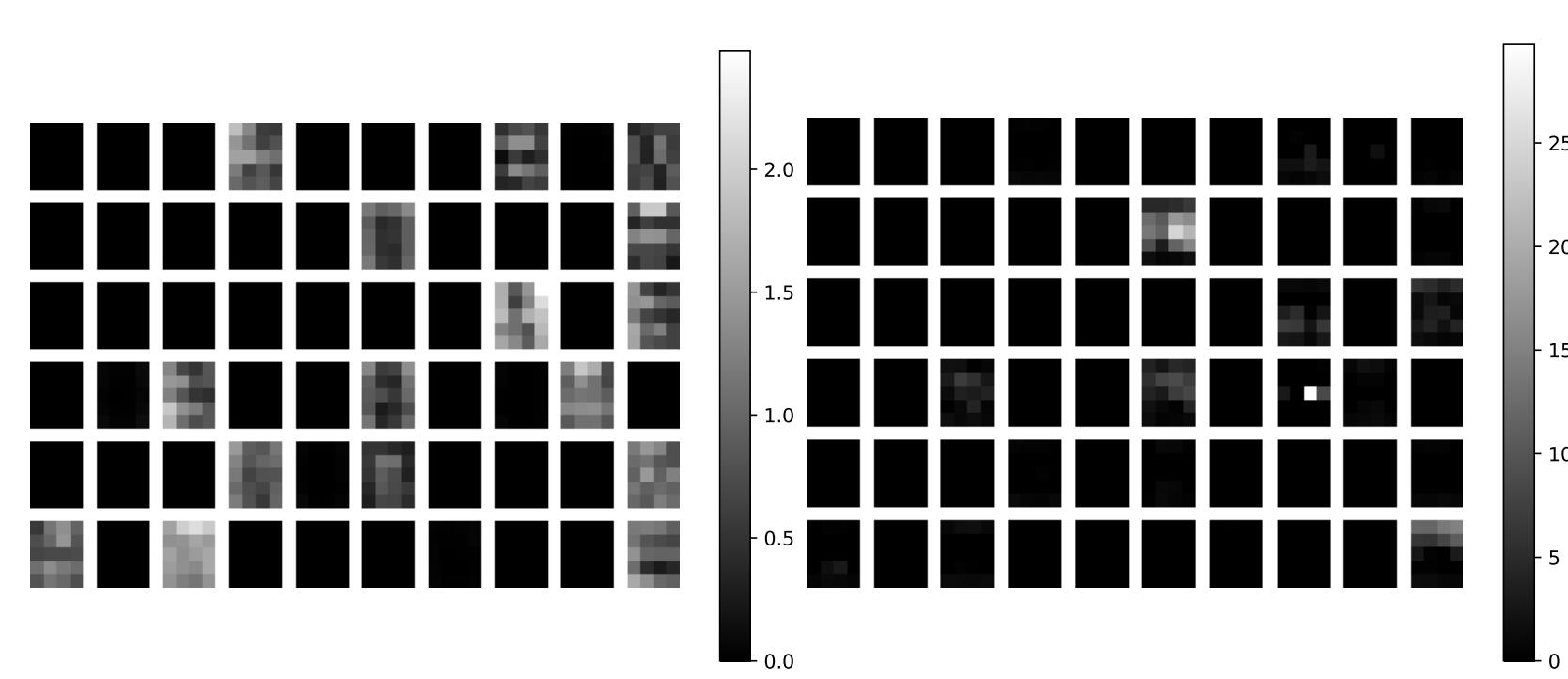

Below is an example of activations from the last convolutional layer for clean (left) and backdoored (right) images. We observe different patterns captured in this layer, suggesting that pruning the channels responsible for these differences could lower the attack success rate.
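The pruning decision can be sketched from recorded activations. The code averages each channel's activation over clean inputs, ranks channels from least to most active, and masks out the most dormant fraction. It assumes the activations of one convolutional layer were already collected as an (N, C, H, W) array, for example with a forward hook. The subsequent fine-tuning on clean data is not shown, and the prune ratio is a free parameter.

```python
import numpy as np

def fine_prune_mask(clean_activations: np.ndarray, prune_ratio: float) -> np.ndarray:
    """clean_activations: (N, C, H, W) activations of one conv layer on clean inputs.
    Returns a boolean keep-mask over the C channels."""
    mean_act = clean_activations.mean(axis=(0, 2, 3))    # average activation per channel
    n_prune = int(round(prune_ratio * len(mean_act)))
    order = np.argsort(mean_act)                          # least active (dormant) channels first
    keep = np.ones(len(mean_act), dtype=bool)
    keep[order[:n_prune]] = False
    return keep

def apply_mask(activations: np.ndarray, keep: np.ndarray) -> np.ndarray:
    # zero out pruned channels, as a pruned layer would
    return activations * keep[None, :, None, None]
```

Channels that barely respond to clean data cost little clean accuracy when removed. Any capacity the backdoor relied on in those channels, however, disappears.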

Neural Cleanse

Reference: https://ieeexplore.ieee.org/document/8835365

Neural Cleanse is a defense method against backdoor attacks in deep learning models that uses reverse engineering to identify hidden triggers and an unlearning algorithm to mitigate the attack.

It first detects whether a model is poisoned and identifies the target label by generating potential reverse triggers for each class.

Then, outlier detection is applied using an anomaly index to find the true trigger and the corresponding target label.

Finally, the identified trigger is used during the unlearning phase, where the model is retrained to forget the malicious association and correctly classify inputs even when the trigger is present, thus effectively neutralizing the backdoor.
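The outlier-detection step can be sketched on the L1 norms of the reverse-engineered triggers, one per class. The anomaly index is the distance to the median in units of the median absolute deviation, scaled by 1.4826 so that it is consistent for normal data. A class is flagged when its index exceeds 2 and its trigger is unusually small, because a backdoor target needs only a tiny perturbation. The trigger optimization itself is not shown, and the helper names are assumptions.

```python
import numpy as np

def anomaly_indices(trigger_l1_norms) -> np.ndarray:
    """MAD-based anomaly index of each class's reverse-engineered trigger size."""
    norms = np.asarray(trigger_l1_norms, dtype=float)
    median = np.median(norms)
    mad = 1.4826 * np.median(np.abs(norms - median))   # consistency constant for normal data
    return np.abs(norms - median) / mad

def flag_infected(trigger_l1_norms, threshold: float = 2.0) -> np.ndarray:
    norms = np.asarray(trigger_l1_norms, dtype=float)
    idx = anomaly_indices(norms)
    # only unusually SMALL triggers indicate a backdoor target label
    return np.where((idx > threshold) & (norms < np.median(norms)))[0]
```

For example, if nine classes need triggers with an L1 norm of roughly 100 and one class needs only 20, that class stands out with an anomaly index far above 2.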

Jittering

Reference: https://proceedings.neurips.cc/paper_files/paper/2022/file/3f9bbf77fbd858e5b6e39d39fe84ed2e-Paper-Conference.pdf

Jittering is a defense method against backdoor attacks that improves the robustness of deep learning models by adding a small amount of random noise to input data. During prediction, the inputs are slightly modified with random variations, which disrupt hidden patterns that could activate a backdoor trigger. In training, jittering helps the model learn to ignore minor random changes in the input, making it more resistant to malicious triggers and reducing the chance of incorrect classifications caused by poisoned samples.
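Inference-time jittering can be sketched as averaging class probabilities over several noisy copies of each input. The sketch assumes Gaussian noise with a fixed σ and clipping to [0, 1]. `predict_proba` stands for any function mapping an image batch to class probabilities. The noise model, σ, and number of copies are assumptions, and the referenced paper's exact scheme may differ. The same `jitter` function could be applied to training batches as augmentation.

```python
import numpy as np

def jitter(x: np.ndarray, sigma: float, rng: np.random.Generator) -> np.ndarray:
    """Add small Gaussian noise and keep pixel values in [0, 1]."""
    return np.clip(x + rng.normal(0.0, sigma, size=x.shape), 0.0, 1.0)

def predict_with_jitter(predict_proba, x: np.ndarray, sigma: float = 0.05,
                        n_samples: int = 8, seed: int = 0) -> np.ndarray:
    """Average class probabilities over several jittered copies of the input batch."""
    rng = np.random.default_rng(seed)
    probs = [predict_proba(jitter(x, sigma, rng)) for _ in range(n_samples)]
    return np.mean(probs, axis=0)
```

Averaging over several perturbations means that a trigger which is fragile to pixel noise only fires on some of the copies. Its influence on the final prediction is diluted accordingly.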

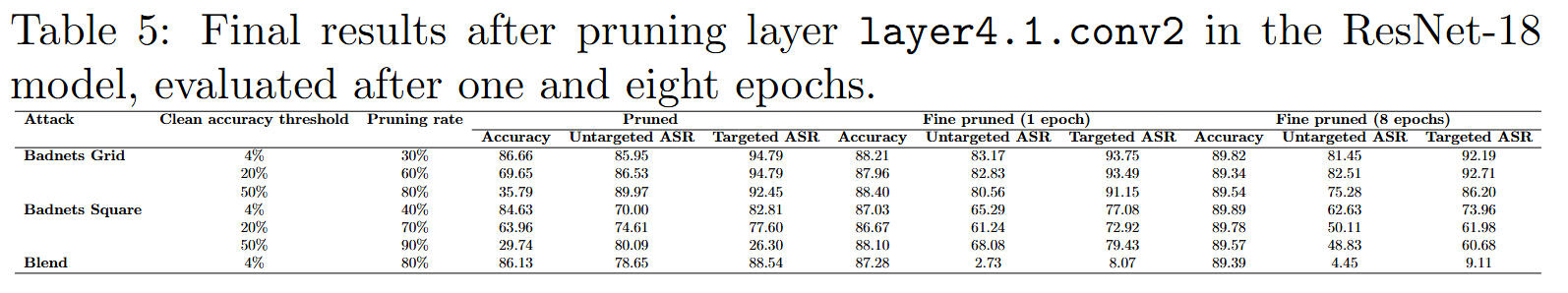

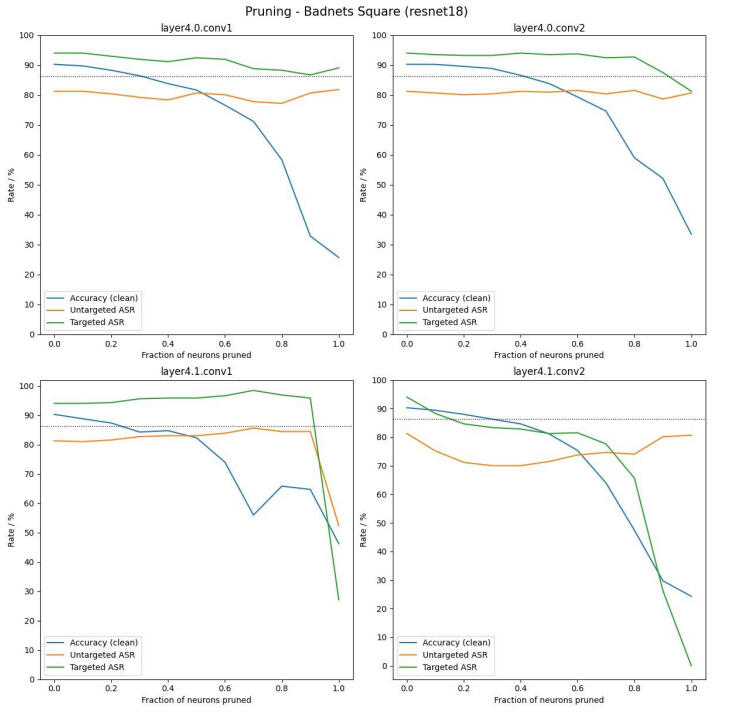

Results - fine-pruning

Based on the results, pruning the layer4.1.conv2 layer proved to be the most effective for observing and reducing the impact of targeted backdoor attacks. The Fine-Pruning defense successfully decreased the Attack Success Rate (ASR), most notably for the Blend attack, where the targeted ASR dropped to 9.11 percent while maintaining 89.39 percent accuracy on clean data. However, the BadNets attacks remained largely effective, indicating that this defense is only partially successful and more effective against certain trigger types than others.

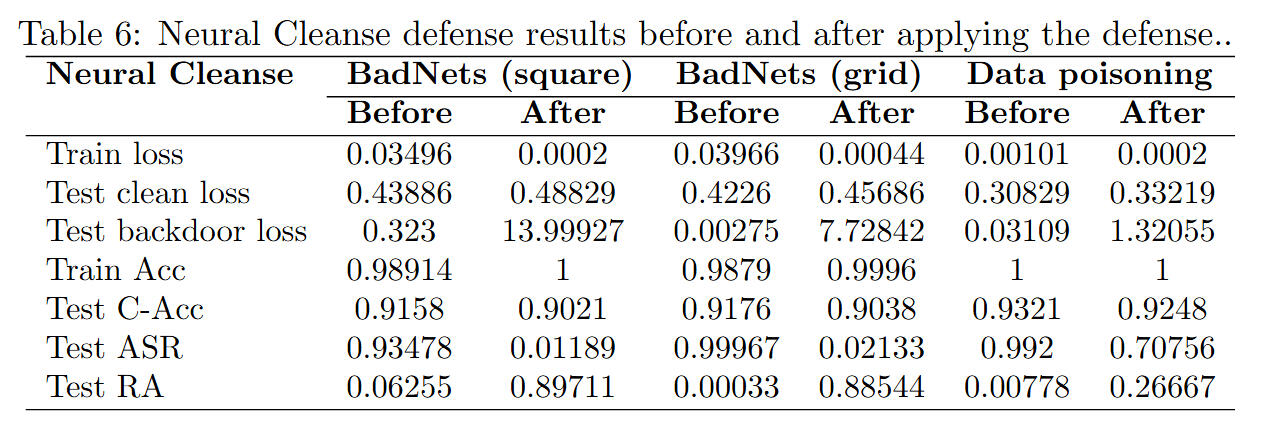

Results - neural cleanse

The Neural Cleanse defense proved highly effective against Badnets attacks, significantly reducing the attack success rate (ASR) to 1.19 percent for the square trigger and 2.13 percent for the grid trigger, while maintaining over 90 percent accuracy on clean data.

However, its performance against the Blend attack was notably weaker, with the ASR remaining at 70.76 percent, indicating that this type of trigger is more resistant to the defense process.

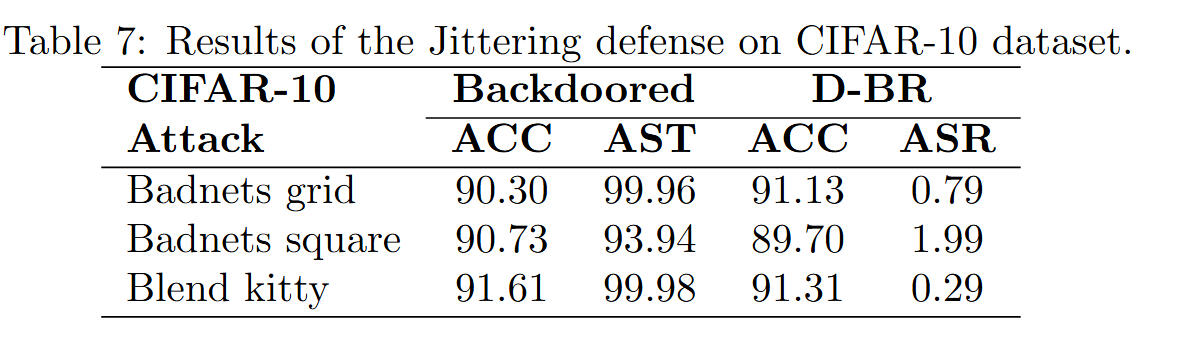

Results - jittering

Jittering was applied to three poisoned datasets generated by the Badnets grid, Badnets square, and Blend kitty attacks using the CIFAR-10 dataset and a ResNet-18 model.

Results show that the attack success rate decreased for all three attacks, with an improvement in accuracy for the Badnets grid case after defense.

However, the defense achieved the weakest performance against the Badnets square attack, where model accuracy dropped slightly below 90 percent and the ASR remained close to 2 percent, suggesting further optimization is needed.

Conclusions

This project demonstrated that backdoor attacks such as BadNets and Blend can effectively manipulate deep learning models through subtle data poisoning while maintaining high accuracy on clean data. Among the tested defenses, Neural Cleanse proved most effective against structured attacks like BadNets, significantly reducing the attack success rate with minimal impact on model performance, while Fine-Pruning worked best against Blend. However, defenses like Jittering and Neural Cleanse were less successful against complex triggers such as Blend, suggesting the need for more general defense strategies.

Team leader of 9 member team @University of Zagreb, FER under supervision of prof.dr.sc. Siniša Šegvić and Ivan Sabolić.

Polyphonic Audio Classification

Introduction and conclusion

My very first "big" machine learning project, done for a LUMEN competition during my second year of studies @FER. Looking back, it isn't really good, but I kept it as a reminder.

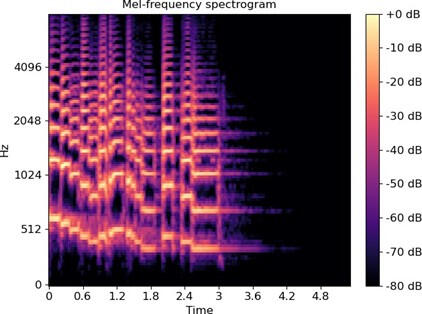

We used the IRMAS dataset, which consists of recordings of various instruments. We extracted mel-spectrograms, chromagrams, and spectral contrasts from one-second snippets and fed them into a convolutional neural network of our own design. Below are images of the mel-spectrograms, chromagrams, and spectral contrasts, in that order.
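The mel-spectrogram feature can be sketched in plain NumPy. The input is split into Hann-windowed frames, the power spectrum of each frame is taken, and it is projected onto triangular filters spaced evenly on the mel scale before taking the log. The parameters (sr = 22050, n_fft = 2048, hop = 512, 128 mel bands) are librosa's defaults and only an assumption about our setup. The sketch uses the HTK mel formula without centering or filter normalization, so its values will not match `librosa.feature.melspectrogram` exactly. Chromagrams and spectral contrast (`librosa.feature.chroma_stft`, `librosa.feature.spectral_contrast`) are not covered.

```python
import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + np.asarray(f) / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (np.asarray(m) / 2595.0) - 1.0)

def mel_filterbank(sr: int, n_fft: int, n_mels: int):
    """Triangular filters evenly spaced on the mel scale; returns (filters, center freqs in Hz)."""
    fft_freqs = np.linspace(0.0, sr / 2.0, n_fft // 2 + 1)
    hz_pts = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_mels + 2))
    lower, center, upper = hz_pts[:-2, None], hz_pts[1:-1, None], hz_pts[2:, None]
    rising = (fft_freqs[None, :] - lower) / (center - lower)
    falling = (upper - fft_freqs[None, :]) / (upper - center)
    return np.maximum(0.0, np.minimum(rising, falling)), hz_pts[1:-1]

def log_mel_spectrogram(y: np.ndarray, sr: int = 22050, n_fft: int = 2048,
                        hop: int = 512, n_mels: int = 128):
    n_frames = 1 + (len(y) - n_fft) // hop
    idx = np.arange(n_fft)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = y[idx] * np.hanning(n_fft)                    # (frames, n_fft) windowed frames
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2       # (frames, n_fft // 2 + 1)
    fb, centers = mel_filterbank(sr, n_fft, n_mels)
    return np.log(fb @ power.T + 1e-10), centers           # (n_mels, frames)
```

For a one-second 440 Hz sine, this yields a 128 × 40 log-mel image whose brightest band sits at the filter centered near 440 Hz.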

On the IRMAS test set, we achieved an F1 score of 60% and a Hamming accuracy of 90%.
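For multi-label instrument tagging, both metrics operate on a binary (samples × instruments) matrix. Hamming accuracy is the fraction of individual label slots predicted correctly, i.e. 1 − Hamming loss. The sketch computes F1 in its micro-averaged form. Which F1 averaging was used for the reported number is not stated, so micro-averaging is an assumption.

```python
import numpy as np

def hamming_accuracy(y_true: np.ndarray, y_pred: np.ndarray) -> float:
    """Fraction of (sample, instrument) label slots predicted correctly = 1 - Hamming loss."""
    return float((y_true == y_pred).mean())

def micro_f1(y_true: np.ndarray, y_pred: np.ndarray) -> float:
    tp = np.sum((y_true == 1) & (y_pred == 1))
    fp = np.sum((y_true == 0) & (y_pred == 1))
    fn = np.sum((y_true == 1) & (y_pred == 0))
    return float(2 * tp / (2 * tp + fp + fn))
```

Hamming accuracy tends to look optimistic when most label slots are negative, since predicting "absent" everywhere already scores well. This is why it is usually reported together with F1.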